Don't make Claude do the same work twice

Claude Agent SDK runs the agent loop. Kitaru adds the durable runtime around a completed invocation — checkpointed results, artifacts, replay boundaries, and waits.

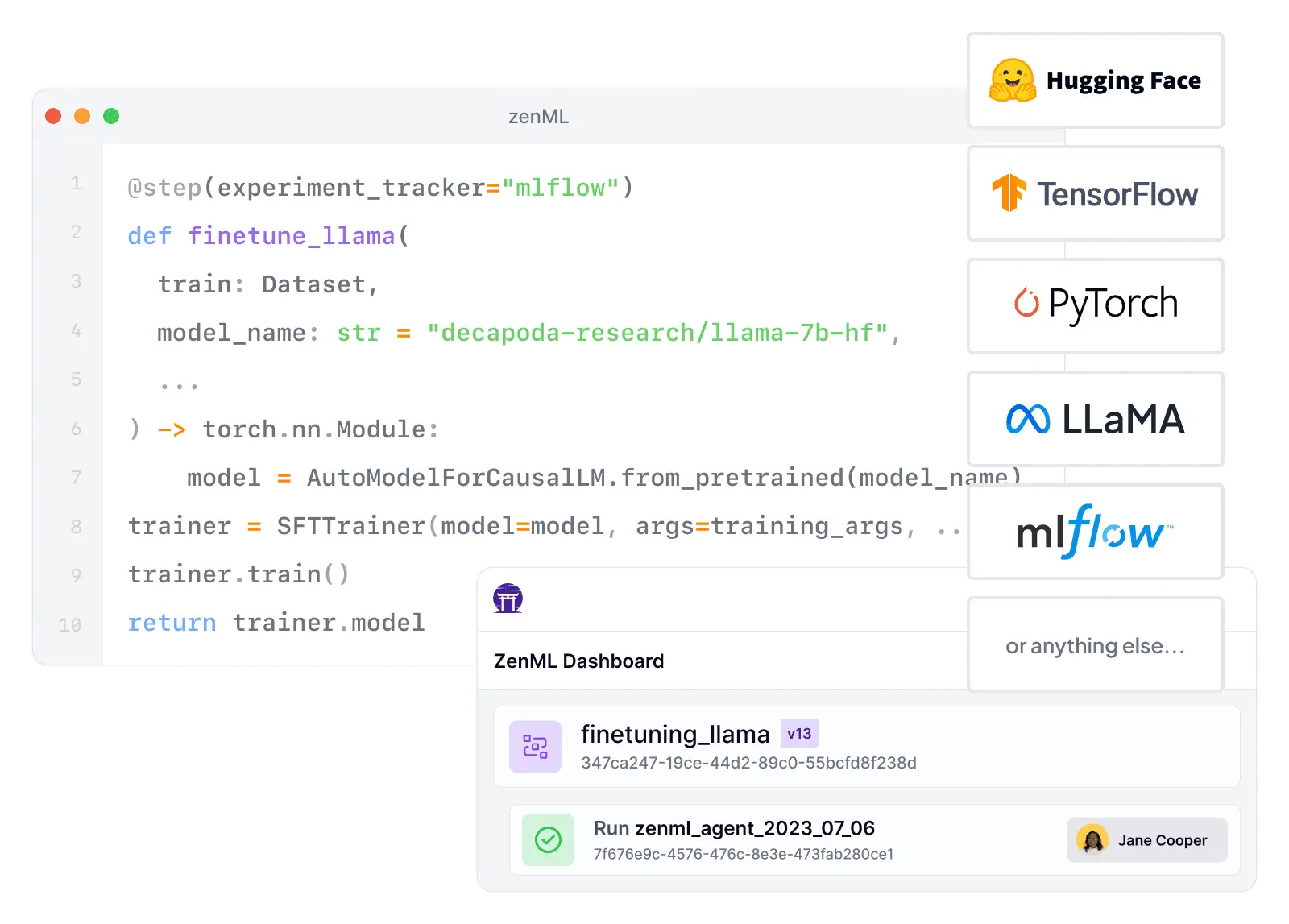

If you're using Argo Workflows for training and evaluation jobs, you already know it's great at orchestrating containers on Kubernetes. What it doesn't give you is the ML lifecycle layer: experiment tracking, artifact lineage, and reproducible, comparable pipeline runs. ZenML brings an ML-native layer, so your team spends less time wiring tools together and more time shipping reliable models.

“ZenML has proven to be a critical asset in our machine learning toolbox, and we are excited to continue leveraging its capabilities to drive ADEO's machine learning initiatives to new heights”

François Serra

ML Engineer / ML Ops / ML Solution architect at ADEO Services

Feature-by-feature comparison

| Workflow Orchestration | ML-native pipelines with portable execution via stacks, while still supporting Kubernetes-based orchestration when needed | Kubernetes-native workflow engine with mature DAG/steps execution, retries, scheduling, and strong operational controls on K8s |

| Integration Flexibility | Composable stack with 50+ MLOps integrations — swap orchestrators, trackers, and deployers without code changes | Runs virtually any containerized tool, but integrations are DIY — teams wire credentials, storage, and conventions manually |

| Vendor Lock-In | Cloud-agnostic by design — stacks make it easy to switch infrastructure providers and tools as needs change | Runs on any Kubernetes cluster and is CNCF-governed open source — lock-in is primarily to Kubernetes itself, not a specific cloud |

| Setup Complexity | pip install zenml — start locally and scale up by swapping stacks, avoiding immediate Kubernetes dependency | Requires Kubernetes cluster plus Argo installation, RBAC config, artifact repository, and optional database for full value |

| Learning Curve | Python-first and ML-first — reduces cognitive load for ML engineers who don't want to become Kubernetes experts | Assumes Kubernetes fluency (CRDs, pods, namespaces, service accounts, storage) — ML teams often need platform help to adopt it |

| Scalability | Delegates compute to scalable backends — Kubernetes, Spark, cloud ML services — for unlimited horizontal scaling | Built for parallel job orchestration on Kubernetes with parallelism limits, retries, and workflow offloading for large DAGs |

| Cost Model | Open-source core is free — pay only for your own infrastructure, with optional managed cloud for enterprise features | Apache-2.0 and CNCF-governed with no per-seat or per-run pricing — costs are Kubernetes infrastructure and operations |

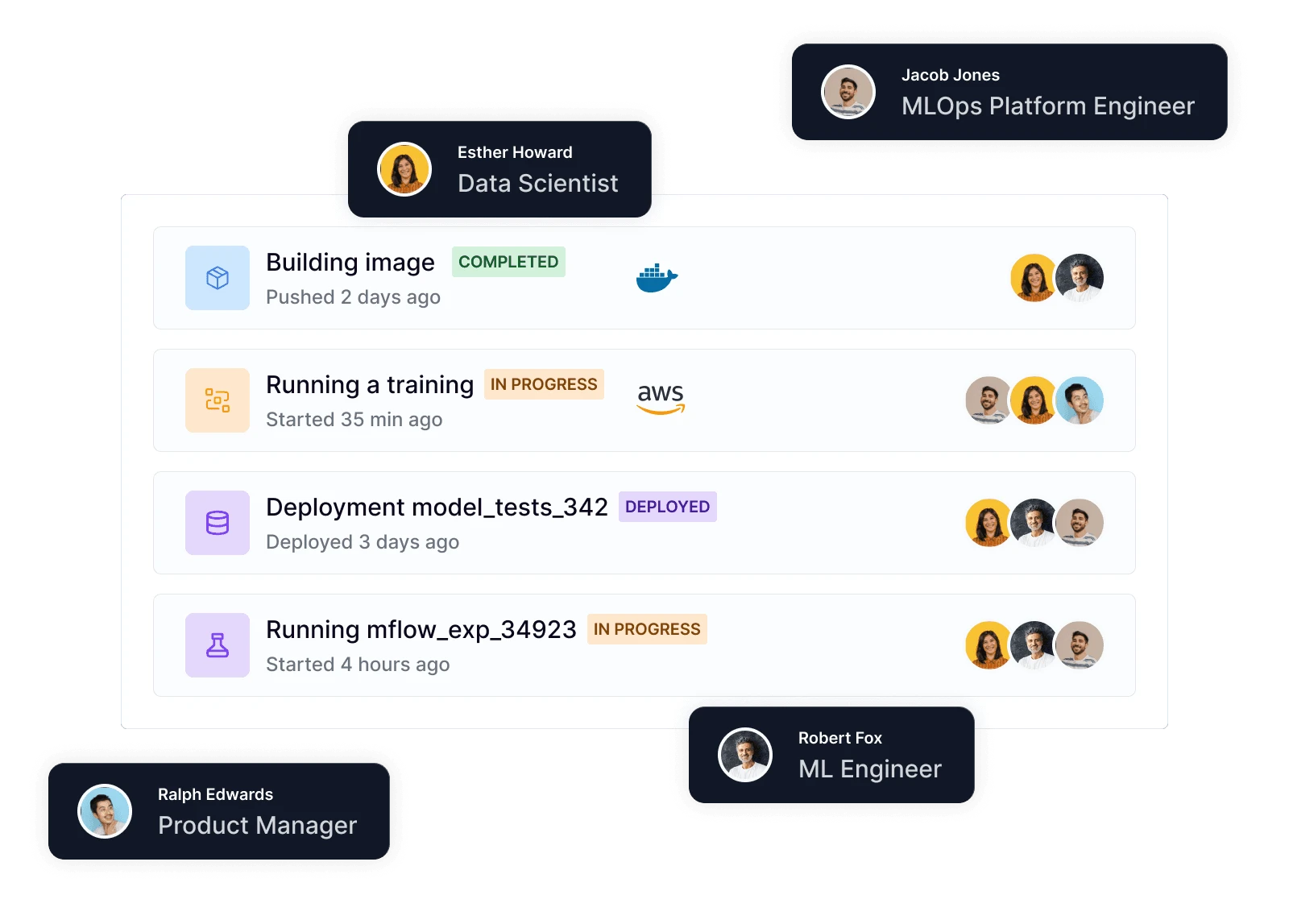

| Collaboration | Code-native collaboration through Git, CI/CD, and code review — ZenML Pro adds RBAC, workspaces, and team dashboards | UI and SSO support for multi-user setups, but collaboration is centered on workflow execution and logs — not ML experiment sharing |

| ML Frameworks | Use any Python ML framework — TensorFlow, PyTorch, scikit-learn, XGBoost, LightGBM — with native materializers and tracking | Framework-agnostic at the runtime level — if it runs in a container on Kubernetes, Argo can orchestrate it |

| Monitoring | Integrates Evidently, WhyLogs, and other monitoring tools as stack components for automated drift detection and alerting | Monitors workflow execution well (statuses, logs, Prometheus metrics), but no production model monitoring or ML drift detection built in |

| Governance | ZenML Pro provides RBAC, SSO, workspaces, and audit trails — self-hosted option keeps all data in your own infrastructure | Kubernetes-centric governance with namespacing, RBAC, and workflow archiving — but ML-specific audit trails require external systems |

| Experiment Tracking | Native metadata tracking plus seamless integration with MLflow, Weights & Biases, Neptune, and Comet for rich experiment comparison | No built-in experiment tracking — teams embed MLflow or W&B inside containers and standardize conventions manually |

| Reproducibility | Automatic artifact versioning, code-to-Git linking, and containerized execution guarantee reproducible pipeline runs | Workflows are rerunnable, but reproducibility depends on pinned containers, data versioning, and discipline — not enforced by default |

| Auto-Retraining | Schedule pipelines via any orchestrator or use ZenML Pro event triggers for drift-based automated retraining workflows | CronWorkflows and webhook triggers enable automated retraining runs — model promotion and registry logic left to your stack |

Code comparison

from zenml import pipeline, step, Model

from zenml.integrations.mlflow.steps import (

mlflow_model_deployer_step,

)

import pandas as pd

from sklearn.ensemble import RandomForestRegressor

from sklearn.metrics import mean_squared_error

import numpy as np

@step

def ingest_data() -> pd.DataFrame:

return pd.read_csv("data/dataset.csv")

@step

def train_model(df: pd.DataFrame) -> RandomForestRegressor:

X, y = df.drop("target", axis=1), df["target"]

model = RandomForestRegressor(n_estimators=100)

model.fit(X, y)

return model

@step

def evaluate(model: RandomForestRegressor, df: pd.DataFrame) -> float:

X, y = df.drop("target", axis=1), df["target"]

preds = model.predict(X)

return float(np.sqrt(mean_squared_error(y, preds)))

@step

def check_drift(df: pd.DataFrame) -> bool:

# Plug in Evidently, Great Expectations, etc.

return detect_drift(df)

@pipeline(model=Model(name="my_model"))

def ml_pipeline():

df = ingest_data()

model = train_model(df)

rmse = evaluate(model, df)

drift = check_drift(df)

# Runs on any orchestrator, logs to MLflow,

# tracks artifacts, and triggers retraining — all

# in one portable, version-controlled pipeline

ml_pipeline()from hera.workflows import Steps, Workflow, WorkflowsService, script

@script()

def preprocess() -> str:

print("s3://ml-artifacts/datasets/processed.csv")

@script()

def train(data_uri: str) -> str:

print("s3://ml-artifacts/models/model.pkl")

@script()

def evaluate(model_uri: str):

print(f"evaluating {model_uri}")

with Workflow(

generate_name="ml-train-eval-",

entrypoint="steps",

namespace="argo",

workflows_service=WorkflowsService(

host="https://localhost:2746"

),

) as w:

with Steps(name="steps"):

data = preprocess()

model = train(arguments={"data_uri": data.result})

evaluate(arguments={"model_uri": model.result})

w.create()

# Requires Kubernetes cluster + Argo installation.

# No built-in experiment tracking, model registry,

# artifact lineage, or reproducibility guarantees.

# ML lifecycle layers must be built separately.

ZenML is fully open-source and vendor-neutral, letting you avoid the significant licensing costs and platform lock-in of proprietary enterprise platforms. Your pipelines remain portable across any infrastructure, from local development to multi-cloud production.

ZenML offers a pip-installable, Python-first approach that lets you start locally and scale later. No enterprise deployment, platform operators, or Kubernetes clusters required to begin — build production-grade ML pipelines in minutes, not weeks.

ZenML's composable stack lets you choose your own orchestrator, experiment tracker, artifact store, and deployer. Swap components freely without re-platforming — your pipelines adapt to your toolchain, not the other way around.

Expand Your Knowledge

Claude Agent SDK runs the agent loop. Kitaru adds the durable runtime around a completed invocation — checkpointed results, artifacts, replay boundaries, and waits.

LangGraph keeps graph state, threads, and interrupts. Kitaru adds the durable workflow around the graph call — replay boundaries, durable waits, and inspectable runs.

The OpenAI Agents SDK stays the harness; Kitaru adds the runtime around it — durable workflow waits, replay boundaries, and inspectable execution history.