Don't make Claude do the same work twice

Claude Agent SDK runs the agent loop. Kitaru adds the durable runtime around a completed invocation — checkpointed results, artifacts, replay boundaries, and waits.

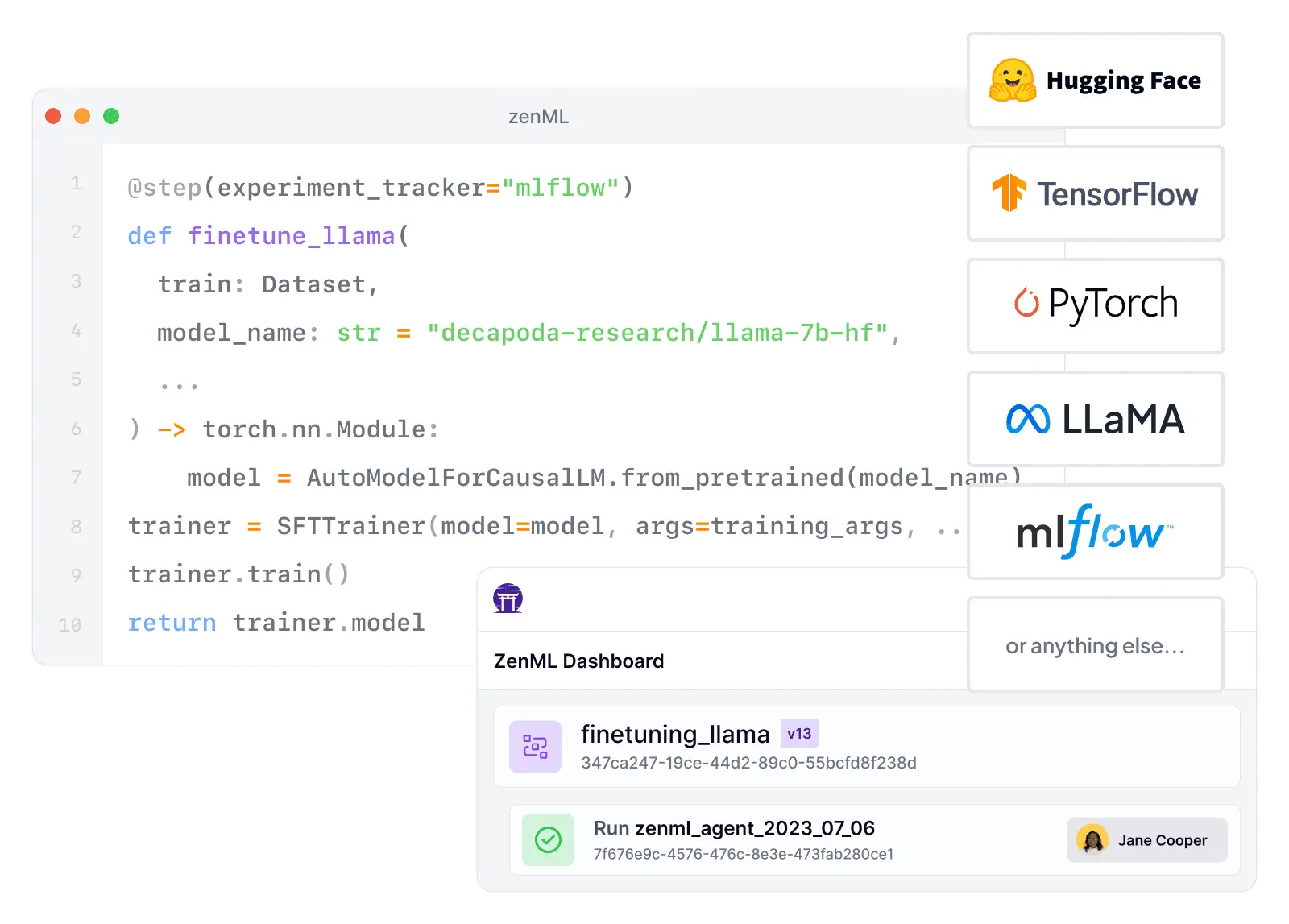

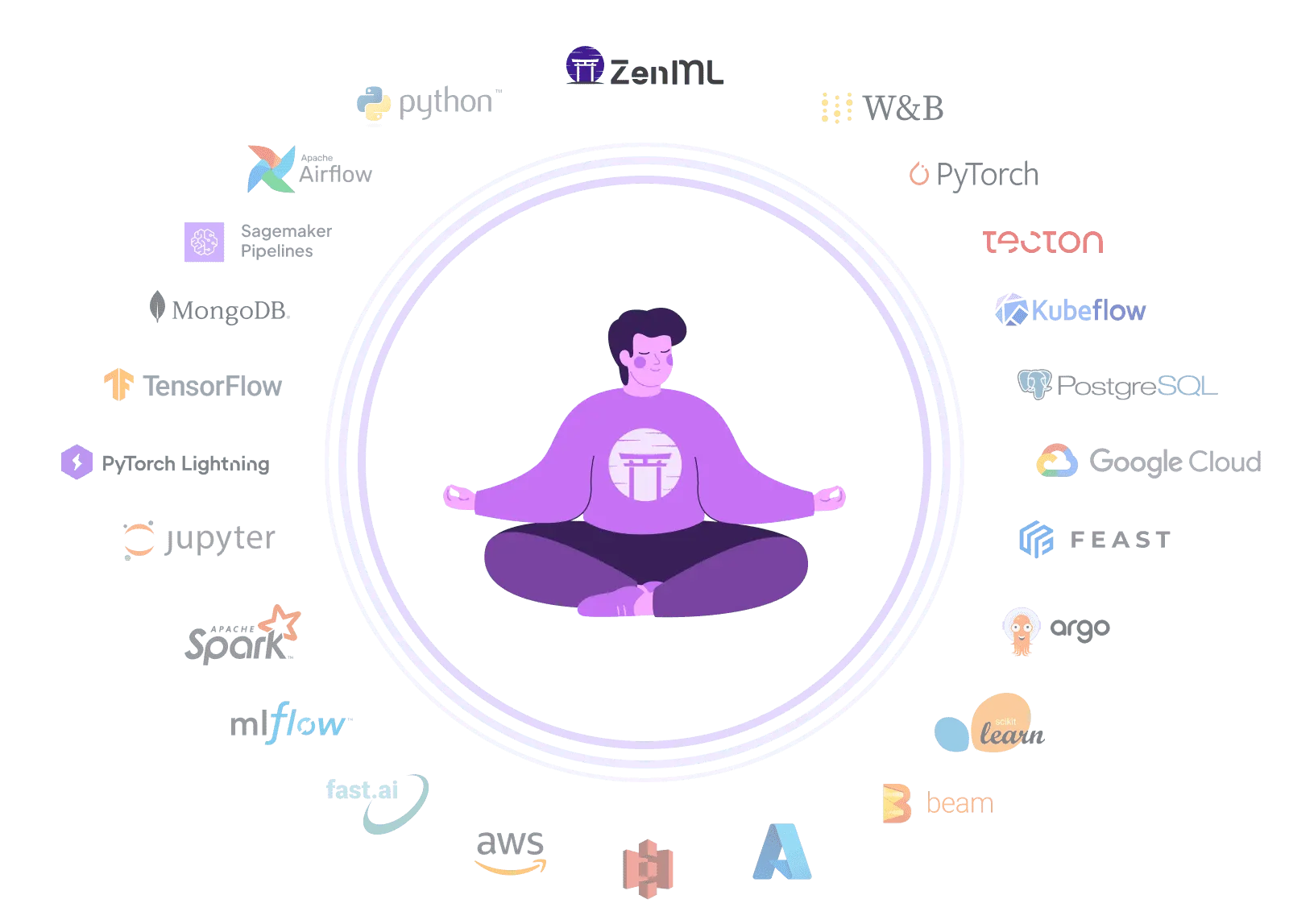

Langfuse helps you understand what your LLM app is doing in production - traces, prompt versions, evaluations, and cost analytics. ZenML helps you build and ship the system behind it: portable pipelines that train, test, deploy, and retrain across clouds and orchestrators. They cover different parts of the AI stack. Use Langfuse to improve runtime quality, and ZenML to operationalize the lifecycle.

“ZenML has proven to be a critical asset in our machine learning toolbox, and we are excited to continue leveraging its capabilities to drive ADEO's machine learning initiatives to new heights”

François Serra

ML Engineer / ML Ops / ML Solution architect at ADEO Services

Feature-by-feature comparison

| Workflow Orchestration | ZenML is built around defining and executing portable ML/AI pipelines across orchestrators and backends, with lifecycle primitives (runs, artifacts, lineage). | Langfuse instruments and analyzes LLM application behavior (traces/evals/prompts), but does not provide native DAG/pipeline execution. |

| Integration Flexibility | ZenML's stack architecture is designed to swap infrastructure components (orchestrators, artifact stores, registries, trackers) without rewriting pipeline code. | Langfuse integrates deeply with LLM ecosystems (OpenTelemetry, OpenAI, LangChain) but is not a general-purpose integration hub for the broader ML toolchain. |

| Vendor Lock-In | ZenML is cloud-agnostic; even the managed control plane keeps artifacts/data in your infrastructure, reducing lock-in. | Langfuse is open source and supports self-hosting; teams can run the same product stack themselves instead of relying on SaaS. |

| Setup Complexity | ZenML can start local and scale to production stacks, but configuring orchestrators and artifact stores adds initial setup steps. | Getting started is straightforward via Langfuse Cloud (sign up, add SDK, see traces); self-hosting also has a guided Docker Compose path. |

| Learning Curve | ZenML offers a powerful abstraction set (stacks, orchestrators, artifact lineage) that pays off at scale but requires systems thinking. | Langfuse's core mental model (trace, spans, generations, scores, prompt versions) matches how LLM teams already debug and iterate. |

| Scalability | ZenML scales by delegating execution to production orchestrators and compute backends, enabling large-scale training/eval pipelines. | Langfuse is engineered for high-ingestion observability using ClickHouse (OLAP), Redis buffering, and a worker architecture built for scale. |

| Cost Model | ZenML's OSS is free; managed tiers are priced around pipeline-run volume with clear plan boundaries and enterprise self-hosting options. | Langfuse publishes transparent monthly SaaS tiers ($29–$2499/mo) plus usage-based units with a pricing calculator; self-host is also available. |

| Collaboration | ZenML Pro adds multi-user collaboration, workspaces/projects, and RBAC/SSO for teams operating shared ML platforms. | Langfuse is inherently team-oriented (shared traces, prompt releases, annotation queues) with enterprise SSO, RBAC, SCIM, and audit logs. |

| ML Frameworks | ZenML supports general ML/AI workflows (classical ML, deep learning, and LLM pipelines) with arbitrary Python steps and many tool integrations. | Langfuse is specialized for LLM applications; it integrates with LLM frameworks rather than covering the full training ecosystem. |

| Monitoring | ZenML provides pipeline/run tracking and can support production monitoring through integrated components and dashboards. | Monitoring is Langfuse's core: production-grade LLM tracing, token/cost tracking, evaluations, and analytics are first-class features. |

| Governance | ZenML Pro emphasizes enterprise controls like RBAC, workspaces/projects, and structured access management for ML operations. | Langfuse offers enterprise governance (SOC2/ISO reports, optional HIPAA BAA, audit logs, SCIM, SSO/RBAC) depending on plan and add-ons. |

| Experiment Tracking | ZenML tracks runs, artifacts, and metadata/lineage, and integrates with experiment trackers as part of the broader ML lifecycle. | Langfuse supports evaluation datasets and score tracking for LLM apps, but is not a general hyperparameter/ML experiment tracking system. |

| Reproducibility | ZenML is designed for reproducibility: pipelines produce versioned artifacts with lineage and (in Pro) snapshots for environment versioning. | Langfuse improves reproducibility at the prompt/trace level (prompt versioning linked to traces), but doesn't manage full pipeline environments or artifact stores. |

| Auto-Retraining | ZenML's pipeline layer is well-suited to scheduled or event-triggered retraining workflows and CI/CD automation patterns. | Langfuse provides evaluation signals and telemetry but does not orchestrate retraining or deployment automation on its own. |

Code comparison

from zenml import pipeline, step

@step

def load_data():

# Load and preprocess your data

...

return train_data, test_data

@step

def train_model(train_data):

# Train using ANY ML framework

...

return model

@step

def evaluate(model, test_data):

# Evaluate and log metrics

...

return metrics

@pipeline

def ml_pipeline():

train, test = load_data()

model = train_model(train)

evaluate(model, test)from langfuse import observe, get_client

from langfuse.openai import openai

langfuse = get_client()

@observe()

def solve(question: str) -> str:

prompt = langfuse.get_prompt("calculator")

# Instrumented OpenAI call; links prompt version to output

resp = openai.chat.completions.create(

model="gpt-4o",

messages=[

{"role": "system", "content": prompt.compile(base=10)},

{"role": "user", "content": question},

],

langfuse_prompt=prompt,

)

answer = resp.choices[0].message.content

# Attach an evaluation score to the current trace

langfuse.score_current_trace(name="is_correct", value=1)

return answer

print(solve("1 + 1 = ?"))

langfuse.flush()

ZenML is fully open-source and vendor-neutral, letting you avoid the significant licensing costs and platform lock-in of proprietary enterprise platforms. Your pipelines remain portable across any infrastructure, from local development to multi-cloud production.

ZenML offers a pip-installable, Python-first approach that lets you start locally and scale later. No enterprise deployment, platform operators, or Kubernetes clusters required to begin — build production-grade ML pipelines in minutes, not weeks.

ZenML's composable stack lets you choose your own orchestrator, experiment tracker, artifact store, and deployer. Swap components freely without re-platforming — your pipelines adapt to your toolchain, not the other way around.

Expand Your Knowledge

Claude Agent SDK runs the agent loop. Kitaru adds the durable runtime around a completed invocation — checkpointed results, artifacts, replay boundaries, and waits.

LangGraph keeps graph state, threads, and interrupts. Kitaru adds the durable workflow around the graph call — replay boundaries, durable waits, and inspectable runs.

The OpenAI Agents SDK stays the harness; Kitaru adds the runtime around it — durable workflow waits, replay boundaries, and inspectable execution history.