KServe is excellent at production model serving on Kubernetes: autoscaling, rollouts, and multi-framework runtimes. ZenML covers the lifecycle around that endpoint: building reproducible pipelines, tracking artifacts and lineage, and delivering models to production. Use KServe for the endpoint and ZenML for the lifecycle that produces it. Together, they turn a model file into a governed, repeatable production system.

“ZenML has proven to be a critical asset in our machine learning toolbox, and we are excited to continue leveraging its capabilities to drive ADEO's machine learning initiatives to new heights”

François Serra

ML Engineer / ML Ops / ML Solution architect at ADEO Services

Feature-by-feature comparison

| Feature | ZenML | KServe |
| --- | --- | --- |
| Workflow Orchestration | Built around defining and running end-to-end ML/AI pipelines with steps, artifacts, and repeatable executions across environments | Orchestrates serving resources on Kubernetes, not training or evaluation workflows; no native pipeline or DAG system |
| Integration Flexibility | Composable stack with 50+ MLOps integrations: swap orchestrators, trackers, and deployers without code changes | Deep integration with K8s serving runtimes, but scoped to inference; doesn't integrate across the broader ML toolchain |
| Vendor Lock-In | Cloud-agnostic by design; stacks make it easy to switch infrastructure providers and tools as needs change | Open-source CNCF project not tied to any cloud; lock-in is to Kubernetes itself and optionally Knative/Istio for key features |
| Setup Complexity | `pip install zenml`, then start locally and progressively adopt infrastructure via stack components without needing K8s on day one | Requires installing Kubernetes controllers, CRDs, and optionally Knative and networking dependencies for full feature set |
| Learning Curve | Python-first abstraction matches how ML engineers write training code, with infrastructure details pushed into configuration | Approachable for K8s-native teams but demands comfort with CRDs, cluster networking, and serving runtime concepts |
| Scalability | Delegates compute to scalable backends (Kubernetes, Spark, cloud ML services) for unlimited horizontal scaling | Designed for scalable multi-tenant inference with request-based autoscaling, scale-to-zero, and canary rollout patterns |
| Cost Model | Open-source core is free; pay only for your own infrastructure, with optional managed cloud for enterprise features | Apache-2.0 with no per-seat or per-request fees; costs are infrastructure and operations, with scale-to-zero reducing waste |
| Collaboration | Code-native collaboration through Git, CI/CD, and code review; ZenML Pro adds RBAC, workspaces, and team dashboards | Relies on Kubernetes-native collaboration (GitOps, cluster RBAC); no ML-specific collaboration layer for experiments or artifacts |
| ML Frameworks | Use any Python ML framework (TensorFlow, PyTorch, scikit-learn, XGBoost, LightGBM) with native materializers and tracking | Multi-framework serving support (TensorFlow, PyTorch, scikit-learn, XGBoost, ONNX) plus growing GenAI/LLM runtimes |
| Monitoring | Integrates Evidently, WhyLogs, and other monitoring tools as stack components for automated drift detection and alerting | Serving-time metrics via Prometheus, payload logging, and drift/outlier detection integrations, scoped to inference endpoints |
| Governance | ZenML Pro provides RBAC, SSO, workspaces, and audit trails; self-hosted option keeps all data in your own infrastructure | Governance inherited from Kubernetes (RBAC, namespaces); ML-specific governance like training-to-deploy lineage is out of scope |
| Experiment Tracking | Native metadata tracking plus seamless integration with MLflow, Weights & Biases, Neptune, and Comet for rich experiment comparison | Does not track experiments; serves whatever model artifact is provided and exposes runtime-level status and metrics |
| Reproducibility | Automatic artifact versioning, code-to-Git linking, and containerized execution guarantee reproducible pipeline runs | Serving configs are reproducible via K8s manifests, but end-to-end reproducibility of training data, code, and environments is out of scope |
| Auto-Retraining | Schedule pipelines via any orchestrator or use ZenML Pro event triggers for drift-based automated retraining workflows | Can roll out new model versions once produced, but does not automate retraining or the upstream triggers that decide when to retrain |
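
To make the "drift-based automated retraining" row concrete, here is a minimal sketch of the kind of decision a drift gate makes. It is not ZenML's or KServe's API and not how Evidently or WhyLogs compute drift: the function names `mean_shift_score` and `should_retrain`, the single-feature mean-shift statistic, and the `0.5` threshold are all assumptions chosen for illustration, using only the Python standard library.

```python
from statistics import mean, stdev
from typing import Sequence


def mean_shift_score(reference: Sequence[float], current: Sequence[float]) -> float:
    """Shift of the current mean, in units of the reference standard deviation.

    Hypothetical helper for illustration. `reference` needs at least two values
    because the sample standard deviation is undefined for fewer.
    """
    ref_std = stdev(reference)
    if ref_std == 0:
        # Constant reference column: any change in the mean is treated as maximal drift.
        return 0.0 if mean(current) == mean(reference) else float("inf")
    return abs(mean(current) - mean(reference)) / ref_std


def should_retrain(
    reference: Sequence[float],
    current: Sequence[float],
    threshold: float = 0.5,  # assumed cut-off, tune per feature
) -> bool:
    """Return True when the mean shift exceeds the (assumed) threshold."""
    return mean_shift_score(reference, current) > threshold


if __name__ == "__main__":
    training_values = [1.0, 2.0, 3.0, 4.0, 5.0]
    recent_values = [4.0, 5.0, 6.0, 7.0, 8.0]
    print(should_retrain(training_values, recent_values))
```

In practice a boolean like this would be returned from a pipeline step, and the orchestrator or an event trigger would decide whether to launch the training pipeline again. A production check would normally look at many features and use a dedicated monitoring library rather than a single mean comparison.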

Code comparison

ZenML

    from zenml import pipeline, step, Model
    # Imported to show where a deployment step would plug in; not called below.
    from zenml.integrations.mlflow.steps import (
        mlflow_model_deployer_step,
    )
    import pandas as pd
    from sklearn.ensemble import RandomForestRegressor
    from sklearn.metrics import mean_squared_error
    import numpy as np


    def detect_drift(df: pd.DataFrame) -> bool:
        """Placeholder, not a library call: replace with a real drift check."""
        return False


    @step
    def ingest_data() -> pd.DataFrame:
        return pd.read_csv("data/dataset.csv")


    @step
    def train_model(df: pd.DataFrame) -> RandomForestRegressor:
        X, y = df.drop("target", axis=1), df["target"]
        model = RandomForestRegressor(n_estimators=100)
        model.fit(X, y)
        return model


    @step
    def evaluate(model: RandomForestRegressor, df: pd.DataFrame) -> float:
        # RMSE on the same frame used for training; use a held-out split in practice.
        X, y = df.drop("target", axis=1), df["target"]
        preds = model.predict(X)
        return float(np.sqrt(mean_squared_error(y, preds)))


    @step
    def check_drift(df: pd.DataFrame) -> bool:
        # Plug in Evidently, Great Expectations, etc.
        return detect_drift(df)


    @pipeline(model=Model(name="my_model"))
    def ml_pipeline():
        df = ingest_data()
        model = train_model(df)
        rmse = evaluate(model, df)
        drift = check_drift(df)


    # Runs on any orchestrator, logs to MLflow,
    # tracks artifacts, and triggers retraining, all
    # in one portable, version-controlled pipeline
    if __name__ == "__main__":
        ml_pipeline()

KServe

    import asyncio

    from kubernetes import client
    from kserve import (
        KServeClient, constants,
        V1beta1InferenceService, V1beta1InferenceServiceSpec,
        V1beta1PredictorSpec, V1beta1SKLearnSpec,
        RESTConfig, InferenceRESTClient,
    )


    async def main():
        name, namespace = "sklearn-iris", "kserve-test"

        # Describe the InferenceService: a scikit-learn predictor loaded from object storage.
        isvc = V1beta1InferenceService(
            api_version=constants.KSERVE_V1BETA1,
            kind=constants.KSERVE_KIND,
            metadata=client.V1ObjectMeta(
                name=name, namespace=namespace
            ),
            spec=V1beta1InferenceServiceSpec(
                predictor=V1beta1PredictorSpec(
                    sklearn=V1beta1SKLearnSpec(
                        storage_uri="s3://my-bucket/iris/model.joblib"
                    )
                )
            ),
        )

        # Create the resource on the cluster and block until it reports ready.
        ksc = KServeClient()
        ksc.create(isvc)
        ksc.wait_isvc_ready(name, namespace=namespace)

        # Send one prediction request using the v1 REST protocol.
        rest = InferenceRESTClient(
            RESTConfig(protocol="v1", retries=5, timeout=30)
        )
        result = await rest.infer(
            f"http://{name}.{namespace}",
            {"instances": [[6.8, 2.8, 4.8, 1.4]]},
            model_name=name,
        )
        print(result)


    asyncio.run(main())

    # Deploys and serves a model on Kubernetes.
    # No pipeline orchestration, experiment tracking,
    # artifact lineage, or retraining automation.
    # Focused purely on the inference endpoint.
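
For readers who want to see what the KServe SDK objects stand for, the sketch below builds the equivalent `InferenceService` manifest and the v1 prediction request as plain Python data, with no cluster or `kserve` package needed. The helper names `build_inference_service` and `build_v1_predict_request` are hypothetical, and the `canaryTrafficPercent` and `minReplicas` values are illustrative additions tied to the canary and scale-to-zero features mentioned in the comparison, not settings from the snippet above. Scale-to-zero via `minReplicas: 0` is assumed to run in a Knative-backed deployment.

```python
import json
from typing import Optional


def build_inference_service(
    name: str,
    namespace: str,
    storage_uri: str,
    min_replicas: int = 0,  # assumption: 0 enables scale-to-zero on Knative-backed installs
    canary_percent: Optional[int] = None,  # assumption: share of traffic for the newest revision
) -> dict:
    """Hypothetical helper: the InferenceService manifest as a plain dict."""
    predictor = {
        "minReplicas": min_replicas,
        "sklearn": {"storageUri": storage_uri},
    }
    if canary_percent is not None:
        predictor["canaryTrafficPercent"] = canary_percent
    return {
        "apiVersion": "serving.kserve.io/v1beta1",
        "kind": "InferenceService",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {"predictor": predictor},
    }


def build_v1_predict_request(base_url: str, model_name: str, instances: list) -> tuple:
    """Hypothetical helper: URL and JSON body for a v1-protocol predict call."""
    url = f"{base_url.rstrip('/')}/v1/models/{model_name}:predict"
    body = json.dumps({"instances": instances})
    return url, body


if __name__ == "__main__":
    manifest = build_inference_service(
        "sklearn-iris",
        "kserve-test",
        "s3://my-bucket/iris/model.joblib",
        canary_percent=10,
    )
    print(json.dumps(manifest, indent=2))

    url, body = build_v1_predict_request(
        "http://sklearn-iris.kserve-test",
        "sklearn-iris",
        [[6.8, 2.8, 4.8, 1.4]],
    )
    print(url)
    print(body)
```

Keeping the manifest as data makes it easy to review in Git or hand to a GitOps tool, which matches the Kubernetes-native collaboration model described in the comparison. None of this covers how the model file at `storage_uri` was produced, which is the part a pipeline tool handles.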

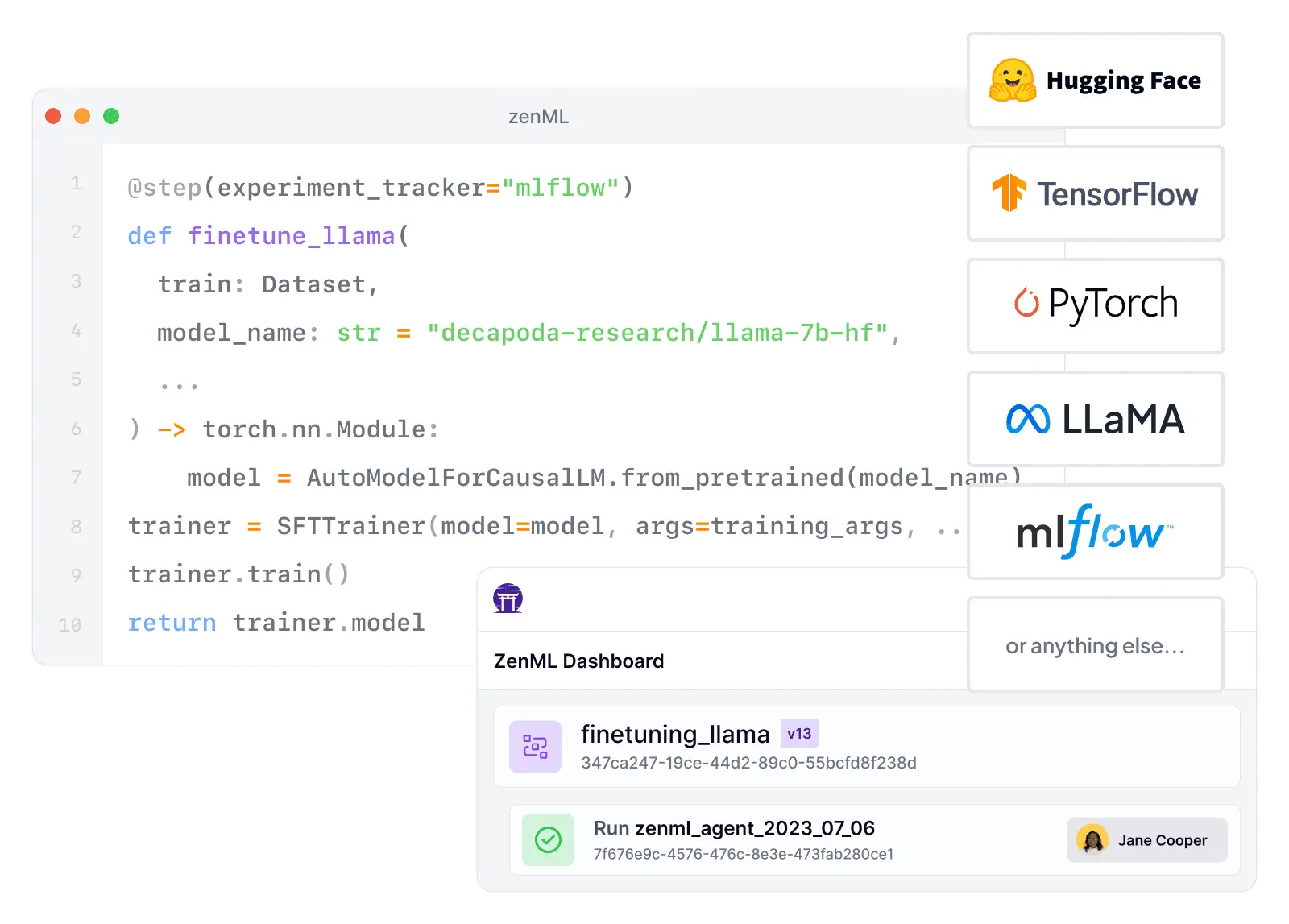

ZenML is fully open-source and vendor-neutral, so you avoid licensing costs and platform lock-in. Your pipelines remain portable across any infrastructure, from local development to multi-cloud production.

ZenML offers a pip-installable, Python-first approach that lets you start locally and scale later. No enterprise deployment, platform operators, or Kubernetes clusters required to begin — build production-grade ML pipelines in minutes, not weeks.

ZenML's composable stack lets you choose your own orchestrator, experiment tracker, artifact store, and deployer. Swap components freely without re-platforming — your pipelines adapt to your toolchain, not the other way around.
