KAI Scheduler vs Run:ai: Which GPU Scheduling Tool Fits Your AI Infrastructure?

We break down GPU scheduling, fractional GPU allocation, gang scheduling, integrations, and pricing to help you pick the right tool for your AI infrastructure.



In this Run:ai vs ClearML comparison, we break down GPU orchestration, workload scheduling, resource policies, RBAC, integrations, and pricing to help you pick the right platform for your AI infrastructure.

In this E2B vs Daytona guide, you'll learn how these two compare across sandbox lifecycle management, output handling, pricing, and more.

In this article, you'll learn about the best E2B alternatives for deploying AI sandboxes. We break down 10 options covering isolation, execution, pricing, and real-world agent workloads.

ZenML's new pipeline deployments feature lets you use the same pipeline syntax to run both batch ML training jobs and deploy real-time AI agents or inference APIs, with seamless local-to-cloud deployment via a unified deployer stack component.

In this Agno vs LangGraph comparison, we explain the differences between the two and conclude which one is best for building multi-agent systems.

In this Outerbounds pricing guide, we break down the costs, features, and value to help you decide if it’s the right investment for your business.

In this Prefect pricing guide, we break down the costs, features, and value to help you decide if it’s the right investment for your business.

ZenML 0.71.0 features the Modal Step Operator for fast, configurable cloud execution, dynamic artifact naming, and enhanced visualizations. It improves API token management, dashboard usability, and infrastructure stability while fixing key bugs. Expanded documentation supports advanced workflows and big data management.

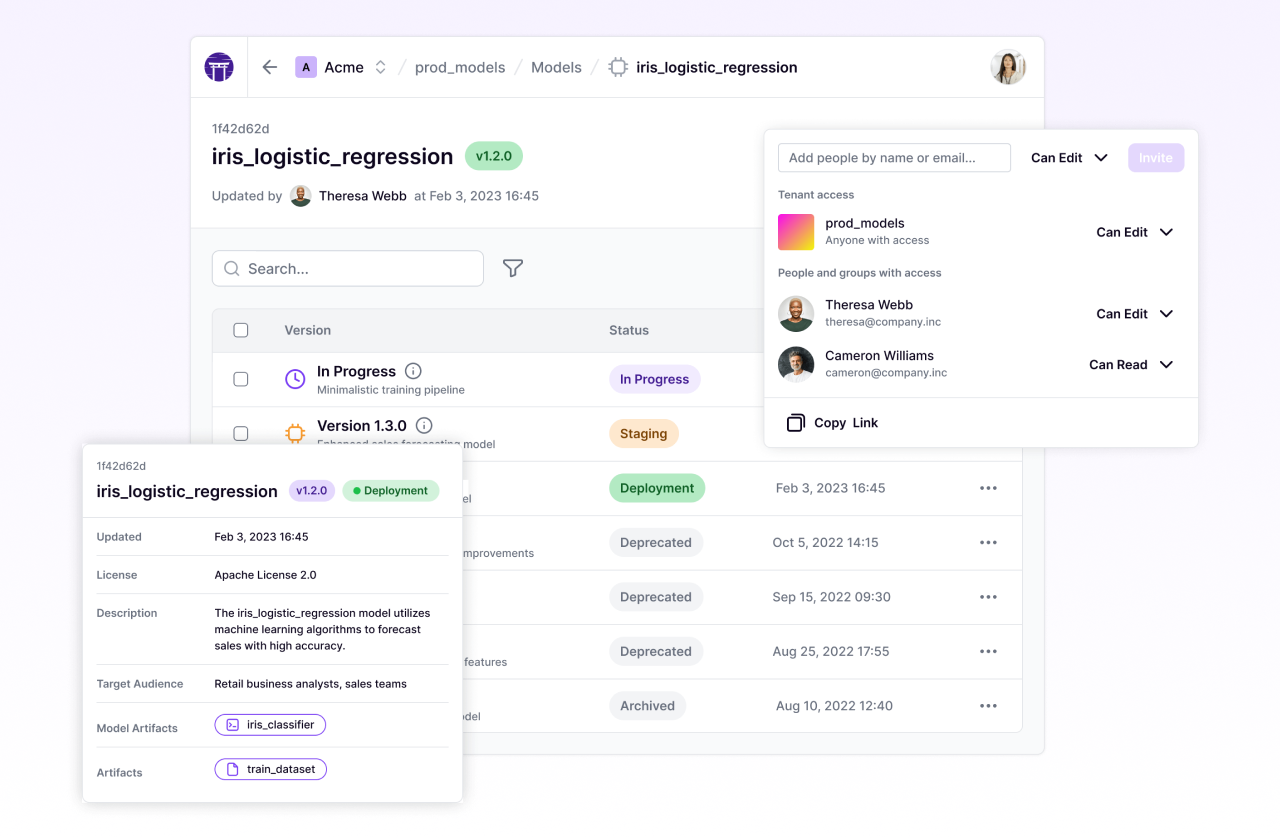

ZenML 0.68.0 introduces several major enhancements including the return of stack components visualization on the dashboard, powerful client-side caching for improved performance, and a streamlined onboarding process that unifies starter and production setups. The release also brings improved artifact management with the new `register_artifact` function, enhanced BentoML integration (v1.3.5), and comprehensive documentation updates, while deprecating legacy features including Python 3.8 support.

Why use ZenML alongside AWS, GCP, or Azure MLOps platforms? Let's dive into how ZenML complements and enhances existing cloud MLOps infrastructure.

Combining ZenML and Neptune can streamline machine learning workflows and give you much clearer visibility into your experiments. ZenML is an extensible framework for creating production-ready pipelines, while Neptune is a metadata store for MLOps. Together, they offer a robust solution for managing the entire ML lifecycle, from experimentation to production, and can significantly speed up development, especially for complex tasks like language model fine-tuning. The integration lets you focus more on innovating and less on managing the intricacies of your ML pipelines.

This release updates the SageMaker Orchestrator, DAG Visualizer, and environment variable handling. It also adds Kubernetes support for SkyPilot and an updated Deepchecks integration, along with other improvements and bug fixes across the platform.

ZenML secures an additional $3.7M in funding led by Point Nine, bringing its total Seed Round to $6.4M, to further its mission of simplifying MLOps. The startup is set to launch ZenML Cloud, a managed service with advanced features, while continuing to expand its open-source framework.

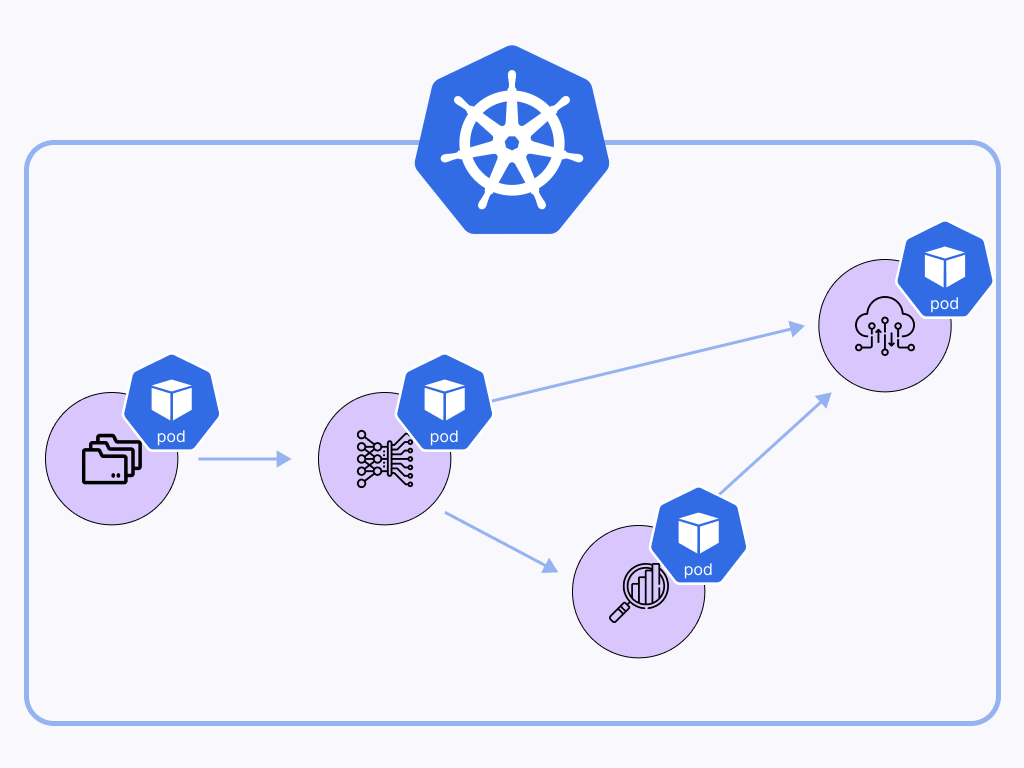

Getting started with distributed ML in the cloud: How to orchestrate ML workflows natively on Amazon Elastic Kubernetes Service (EKS).

How ZenML gives you the best of both worlds: serverless managed infrastructure without the vendor lock-in.

With ZenML 0.6.3, you can now run your ZenML steps on SageMaker, Vertex AI, and AzureML! It's common for certain steps, such as model training, to require specific infrastructure (e.g. a GPU-enabled environment), and Step Operators let you switch out the infrastructure for individual steps to support this.