Databricks vs SageMaker vs ZenML: Pick Your Platform, Keep Your Pipelines Portable

This article compares Databricks vs SageMaker vs ZenML on orchestration, features, GenAI, integrations, and pricing for ML platform teams.

115 posts with this tag

Compare Dataiku vs Databricks vs ZenML across workflow orchestration, visualization, experiment tracking, governance, integrations, and pricing to choose the right ML platform.

We break down GPU scheduling, fractional GPU allocation, gang scheduling, integrations, and pricing to help you pick the right tool for your AI infrastructure.

In this Run:ai vs ClearML comparison, we break down GPU orchestration, workload scheduling, resource policies, RBAC, integrations, and pricing to help you pick the right platform for your AI infrastructure.

ML pipelines were DAGs. Agents are loops. The orchestration layer that worked for training jobs doesn't work for autonomous systems, and the industry is scrambling to catch up.

In this article, you will learn about the best Comet alternatives for model evaluation.

Compare LangSmith, MLflow, and ZenML across pipeline orchestration, reproducibility, deployment, and pricing to choose the right production AI tool.

In this article, you learn about the best DVC alternatives that help you manage large datasets for your ML projects.

This Kubeflow vs SageMaker vs ZenML article helps you choose the framework best for batch and pipeline-driven ML systems.

ZenML's new Quick Wins skill for Claude Code automatically audits your ML pipelines and implements 15 best-practice improvements (from metadata logging to Model Control Plane setup) based on what's actually missing in your codebase.

ML pipeline scheduling hides complexity beneath simple cron syntax—lessons on freshness, monitoring gaps, and overrun policies from Twitter, LinkedIn, and Shopify.
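
One of those policies, deciding what happens when a scheduled run fires while the previous one is still running, can be sketched in a few lines. The three options below mirror the `Allow`, `Forbid`, and `Replace` values of a Kubernetes CronJob's `concurrencyPolicy`. The `on_schedule_tick` function and its action strings are hypothetical and do not come from the article or from any scheduler's API.

```python
from enum import Enum


class OverrunPolicy(Enum):
    """What to do when a cron tick fires while the previous run is still active."""
    ALLOW = "allow"      # start the new run alongside the old one
    FORBID = "forbid"    # skip this tick and let the old run finish
    REPLACE = "replace"  # cancel the old run, then start the new one


def on_schedule_tick(policy: OverrunPolicy, previous_run_active: bool) -> list:
    """Return the (hypothetical) actions a scheduler would take on this tick."""
    if not previous_run_active or policy is OverrunPolicy.ALLOW:
        return ["start_new"]
    if policy is OverrunPolicy.FORBID:
        return ["skip"]
    return ["cancel_previous", "start_new"]


if __name__ == "__main__":
    for policy in OverrunPolicy:
        print(policy.name, on_schedule_tick(policy, previous_run_active=True))
```

`FORBID` guarantees that at most one run is alive, but if runs keep overrunning it can quietly widen freshness gaps. In practice it pairs well with an alert on skipped ticks.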

In this MLflow vs SageMaker vs ZenML article, we compare their experiment tracking, model registry, evaluation, integrations, and other capabilities.

In this ClearML vs MLflow vs ZenML article, we compare the three MLOps frameworks and conclude which one is best suited for you.

In this Prefect vs Temporal vs ZenML article, we compare the three to see which one is the best for data and ML teams.

This Databricks vs Snowflake guide compares both platforms so you can decide which one is the right data intelligence platform for your needs.

In this article, you learn about the best n8n alternatives for workflow automation.

In this article, you will learn about the best ClearML alternatives for experiment tracking and building ML pipelines.

An Airflow vs Kubeflow vs ZenML guide that does a feature-by-feature comparison.

In this Slurm vs Kubernetes comparison guide, we compare their primary workflows, control planes, resource models, and scheduling policies.

In this article, you learn about the best Temporal alternatives for ML and data teams.

In this Neptune AI vs WandB vs ZenML article, we compare these platforms’ features, integrations, and pricing.

In this Neptune AI vs MLflow vs ZenML article, we explain the difference between the three platforms by comparing their features, integrations, and pricing.

In this article, you will learn about the best Neptune AI alternatives to help you track your ML experiments better.

In this Temporal vs Airflow comparison, we break down the key differences in architecture, features, and use cases to help you decide which tool belongs in your stack.

Neptune AI is terminating its standalone SaaS solution. Switch to ZenML to track ML experiments and do much more.

In this article, you learn about the best Datadog alternatives you can use for full-stack observability.

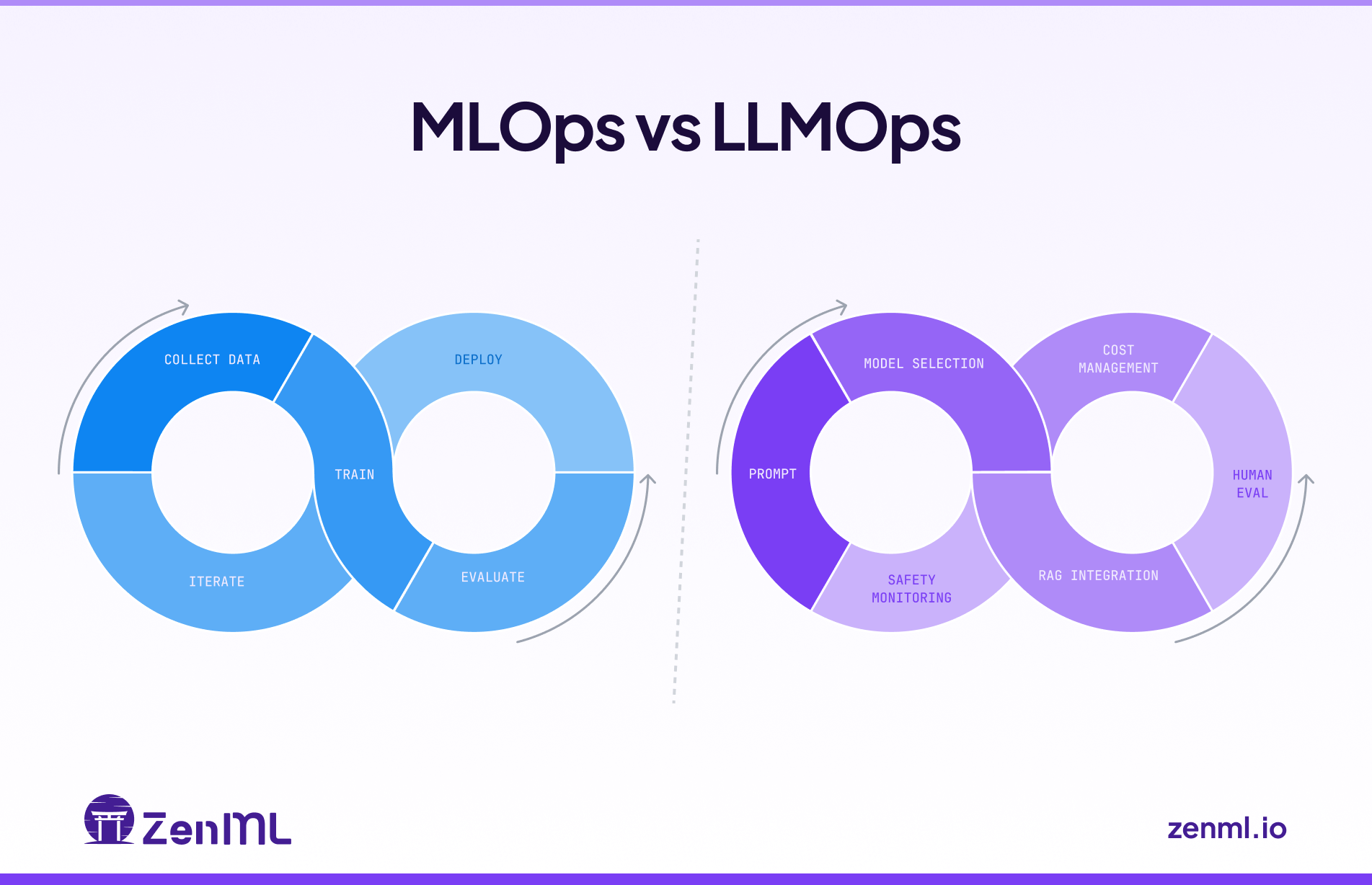

In this guide, we showcase the differences between MLOps and LLMOps and explain how to use them in tandem.

ZenML's Pipeline Deployments transform pipelines into persistent HTTP services with warm state, instant rollbacks, and full observability—unifying real-time AI agents and classical ML models under one production-ready abstraction.

Discover the 10 best vector databases for RAG pipelines.

In this Smolagents vs LangGraph article, we explain the difference between the two and conclude which one is better for building AI agents.

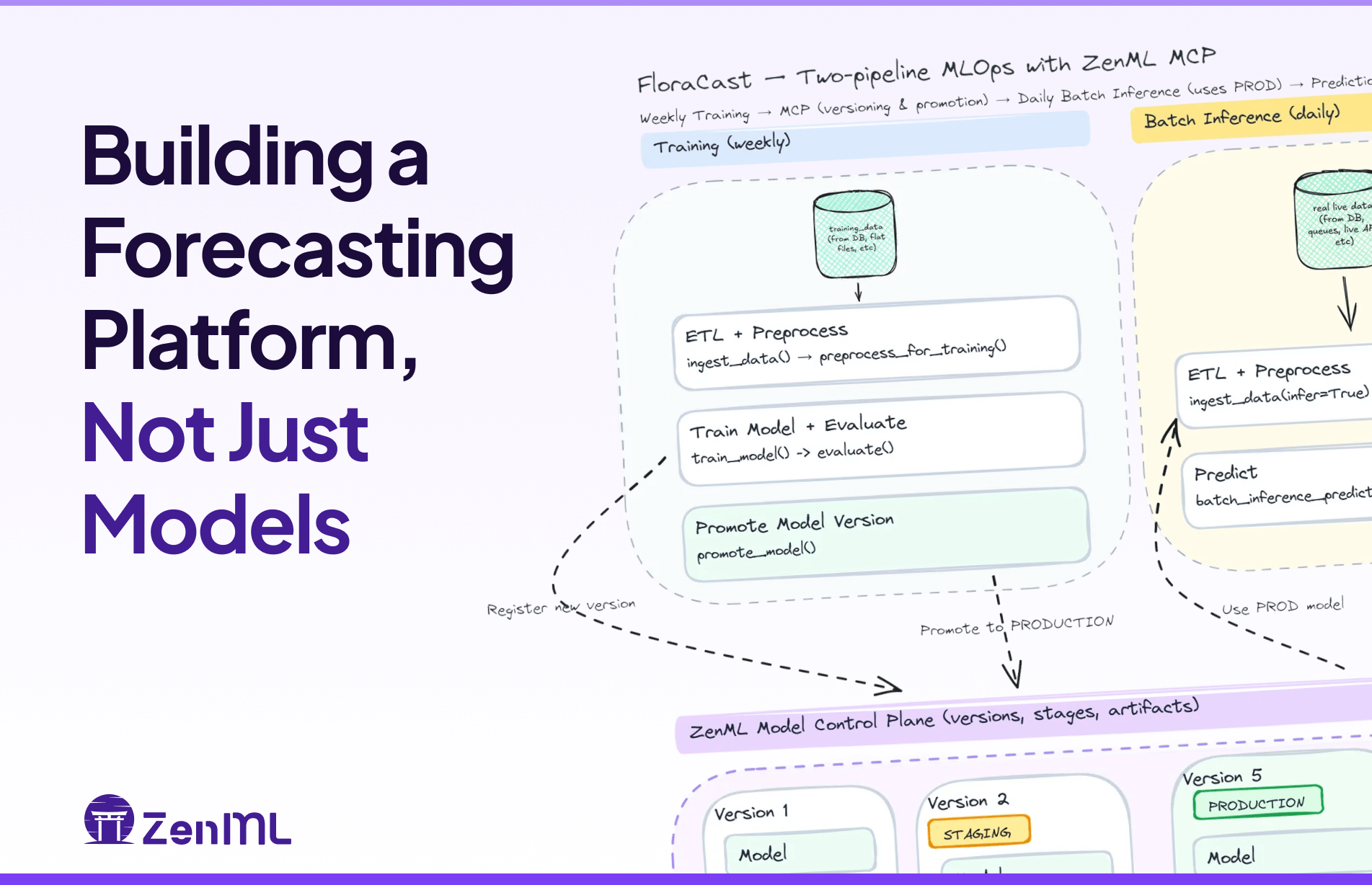

FloraCast is a production-ready template that shows how to build a forecasting platform—config-driven experiments, model versioning/staging, batch inference, and scheduled retrains—with ZenML and Darts.

Discover the best Kedro alternatives to build production-grade data science pipelines.

Discover the top 8 LangGraph alternatives for scalable agent orchestration.

In this ClearML pricing breakdown, we discuss the costs, features, and value ClearML provides to help you decide if it’s the right investment for your business.

In this Prefect vs Airflow vs ZenML article, we explain the difference between the three platforms and show how to use them together.

In this WandB pricing guide, we break down the costs, features, and value to help you decide if it’s the right investment for your business.

In this Flyte vs Airflow vs ZenML article, we explain the difference between the three platforms and show how to use them together.

In this Metaflow vs MLflow vs ZenML article, we explain the difference between the three platforms and show how to use them together.

In this Outerbounds pricing guide, we break down the costs, features, and value to help you decide if it’s the right investment for your business.

Discover the top 8 Metaflow alternatives to streamline your ML workflows.

In this Prefect pricing guide, we break down the costs, features, and value to help you decide if it’s the right investment for your business.

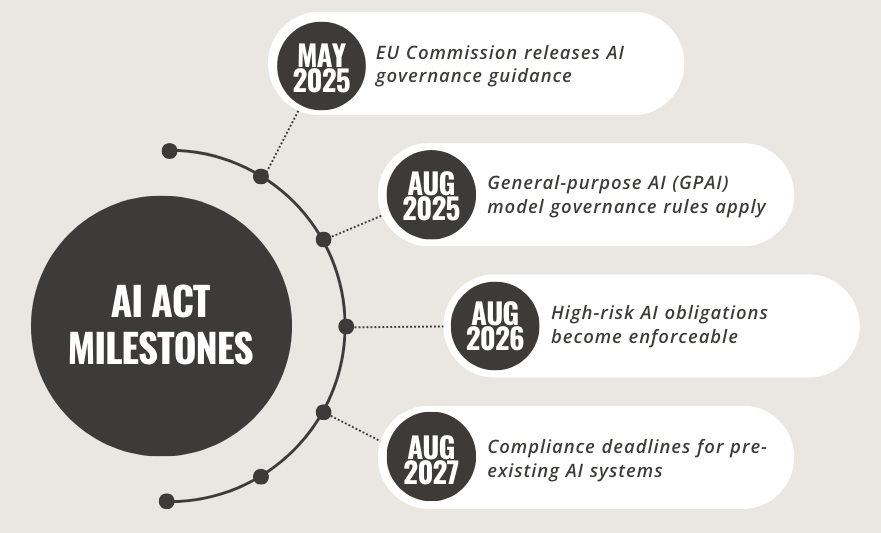

Manual EU AI Act compliance is unmanageable. This credit scoring pipeline shows how ZenML transforms regulatory requirements into automated workflows—from bias detection and risk assessment to human oversight gates and Annex IV documentation.

Traditional banks face growing pressure to deploy machine learning rapidly while meeting strict regulatory requirements. This blog post explores how modern MLOps practices, like automated data lineage, validation testing, and model observability, can help financial institutions bridge the gap. Featuring real-world insights from NatWest and an open-source ZenML pipeline, it offers a practical roadmap for compliant, scalable AI deployment.

Future-proof your ML operations by building portable pipelines that work across multiple platforms instead of forcing standardization on a single solution.

In this MLflow vs Weights & Biases vs ZenML article, we explain the difference between the three platforms and also show how to use them together.

Discover the best MLflow alternatives designed to improve all your ML operations.

An in-depth analysis of retail MLOps challenges, covering data complexity, edge computing, seasonality, and multi-cloud deployment, with real-world examples from major retailers like Wayfair and Starbucks, and practical solutions including ZenML's impact in reducing deployment time from 8.5 to 2 weeks at Adeo Leroy Merlin.

In this Kubeflow vs MLflow vs ZenML article, we explain the difference between the three platforms by comparing their features, integrations, and pricing.

Explores how energy companies can leverage ZenML's MLOps framework to meet Ofgem's regulatory requirements for AI systems, ensuring fairness, transparency, accountability, and security while maintaining innovation in the rapidly evolving energy sector.

Enterprises that split ML work across multiple AWS accounts (development, staging, and production) gain security benefits but also run into operational bottlenecks when managing models. This post dives into ten critical MLOps challenges in multi-account AWS environments, including complex pipeline languages, lack of centralized visibility, and configuration management issues. Learn how organizations can leverage ZenML's solutions to achieve faster, more reliable model deployment across Dev, QA, and Prod environments while maintaining security and compliance requirements.

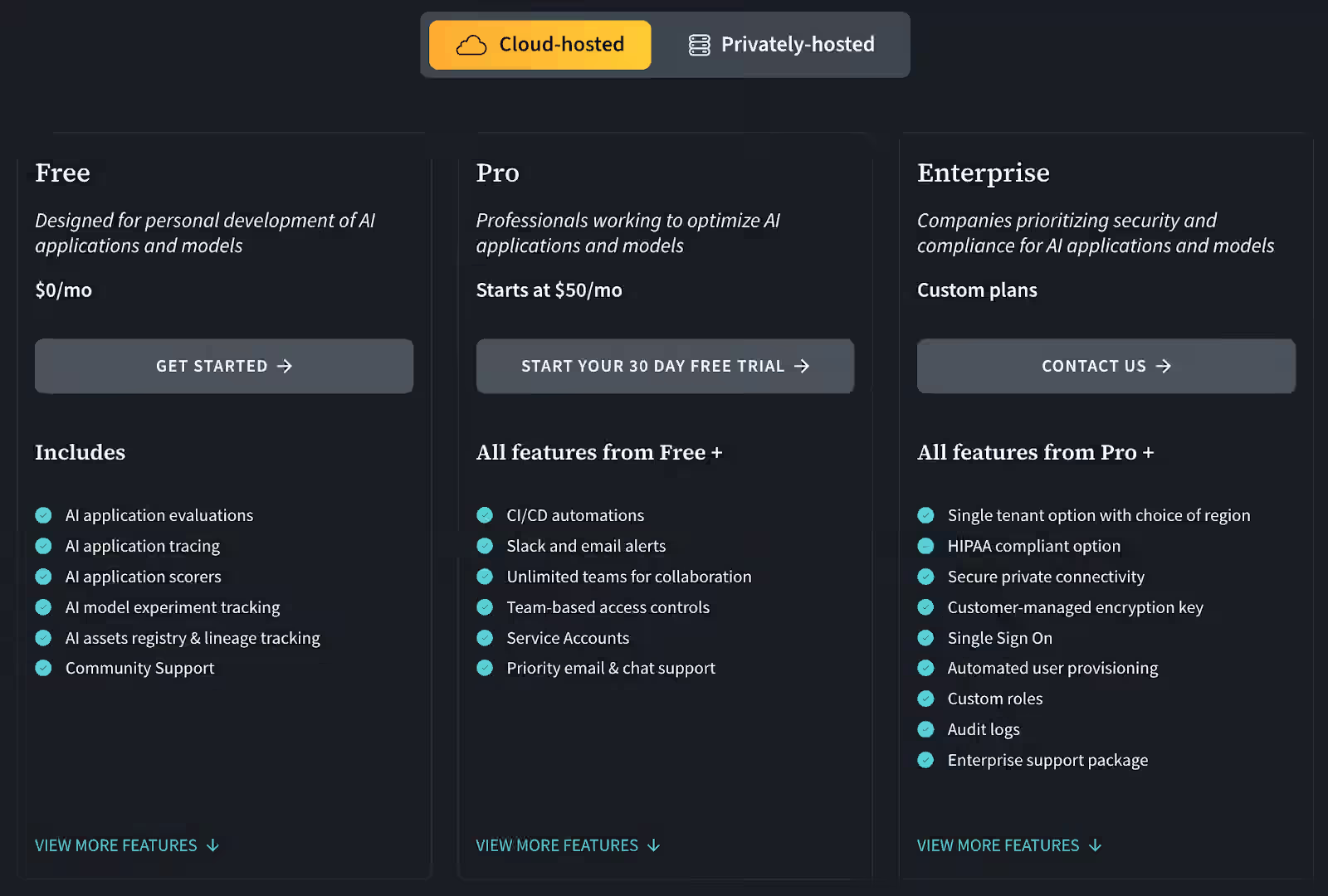

Learn when to upgrade from open-source ZenML to Pro features with our subway-map guide to scaling ML operations for growing teams, from solo experiments to enterprise collaboration.

Discover how ZenML's Service Connectors solve one of MLOps' most frustrating challenges: credential management. This deep dive explores how Service Connectors eliminate security risks and save engineer time by providing a unified authentication layer across cloud providers (AWS, GCP, Azure). Learn how this approach improves developer experience with reduced boilerplate, enforces security best practices with short-lived tokens, and enables true multi-cloud ML workflows without credential headaches. Compare ZenML's solution with alternatives from Kubeflow, Airflow, and cloud-native platforms to understand why proper credential abstraction is the unsung hero of efficient MLOps.

Discover 8 practical alternatives to Kubeflow that address its common challenges of complexity and operational overhead. From Argo Workflows' lightweight Kubernetes approach to ZenML's developer-friendly experience, we analyze each tool's strengths across infrastructure needs, developer experience, and ML-specific capabilities, helping you find an orchestration solution that removes barriers from your ML workflows rather than adding them.

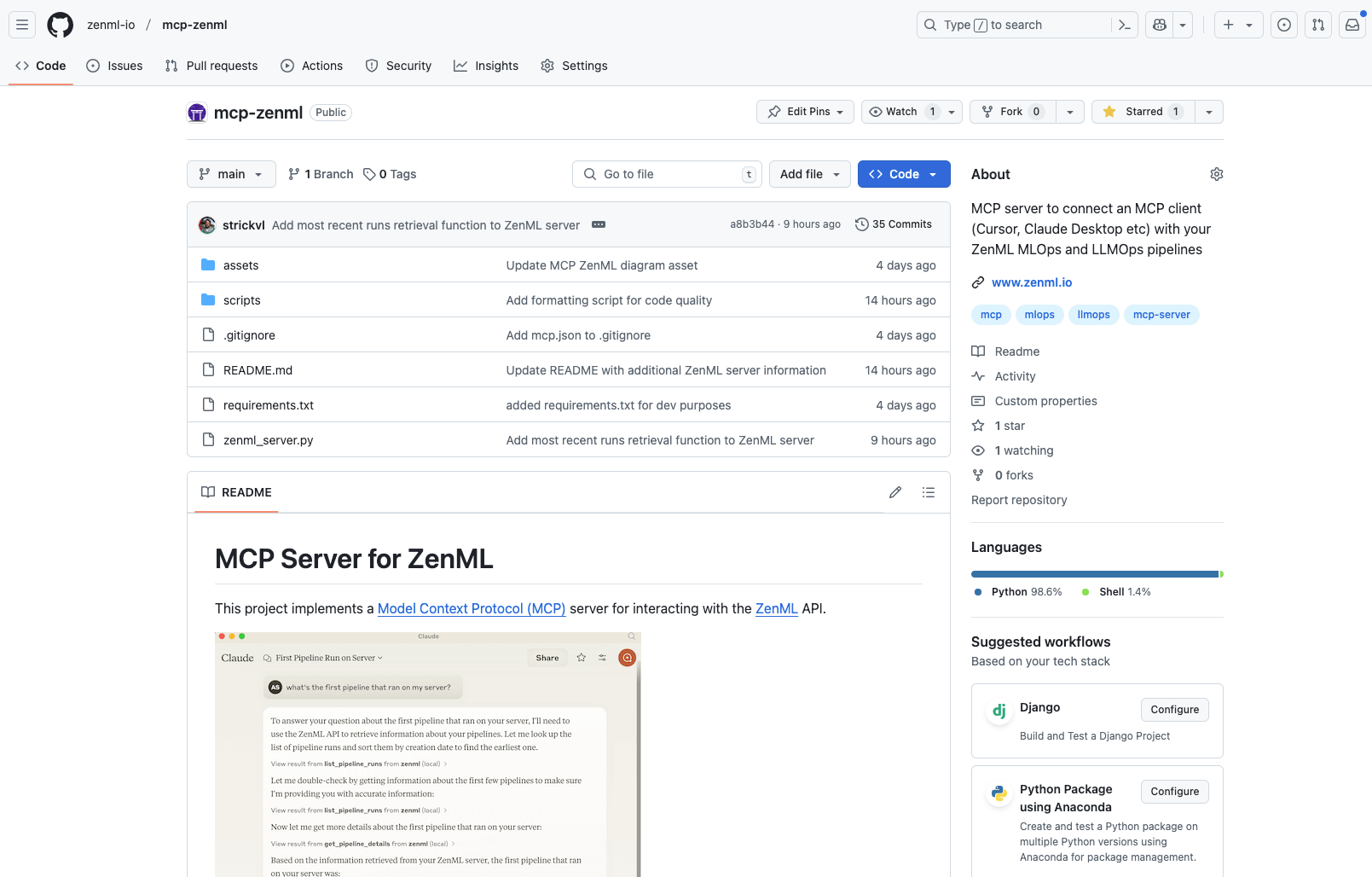

Discover the new ZenML MCP Server that brings conversational AI to ML pipelines. Learn how this implementation of the Model Context Protocol allows natural language interaction with your infrastructure, enabling query capabilities, pipeline analytics, and run management through simple conversation. Explore current features, engineering decisions, and future roadmap for this timely addition to the rapidly evolving MCP ecosystem.

The EU AI Act, partially in effect since February 2025, introduces comprehensive regulations for artificial intelligence systems with significant implications for global AI development. This landmark legislation categorizes AI systems by risk level, from prohibited applications to high-risk and limited-risk systems, and establishes strict requirements for transparency, accountability, and compliance. The Act imposes substantial penalties for violations (for the most serious breaches, up to €35 million or 7% of global annual turnover, whichever is higher) and sets out an implementation timeline running through 2027. Organizations must take immediate action to audit their AI systems, implement robust governance infrastructure, and enhance development practices to ensure compliance, with tools like ZenML offering technical solutions for meeting these regulatory requirements.

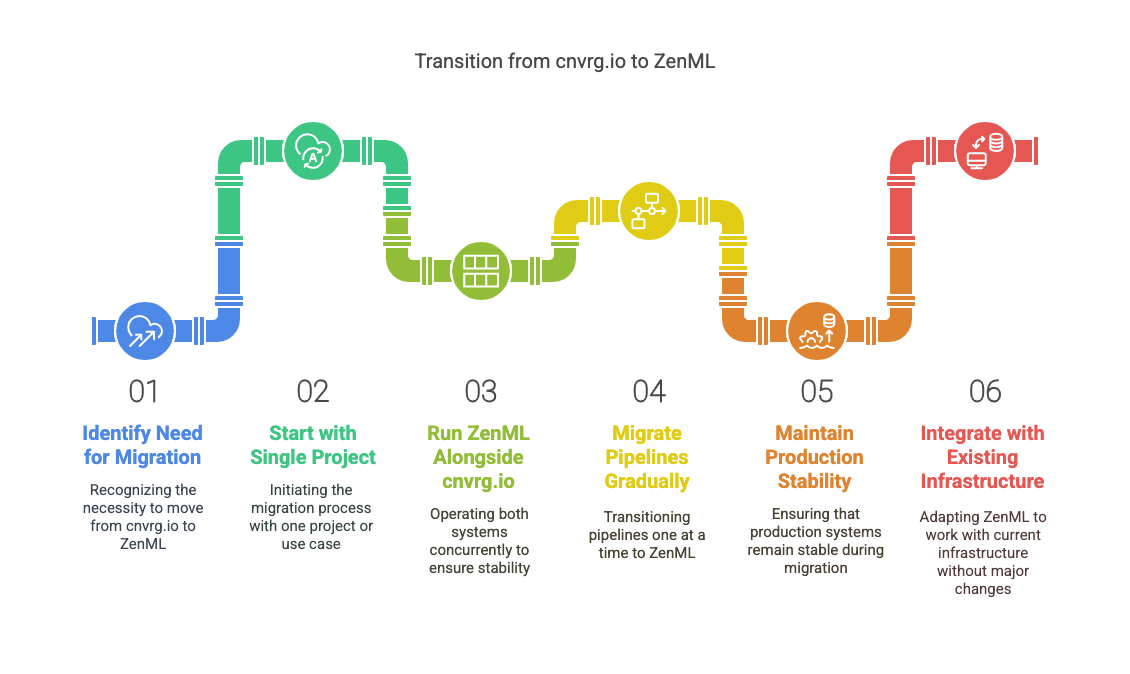

Learn how to migrate from cnvrg.io to ZenML's open-source MLOps framework. Discover a sustainable alternative before Intel Tiber AI Studio's 2025 end-of-life. Get started with your MLOps transition today.

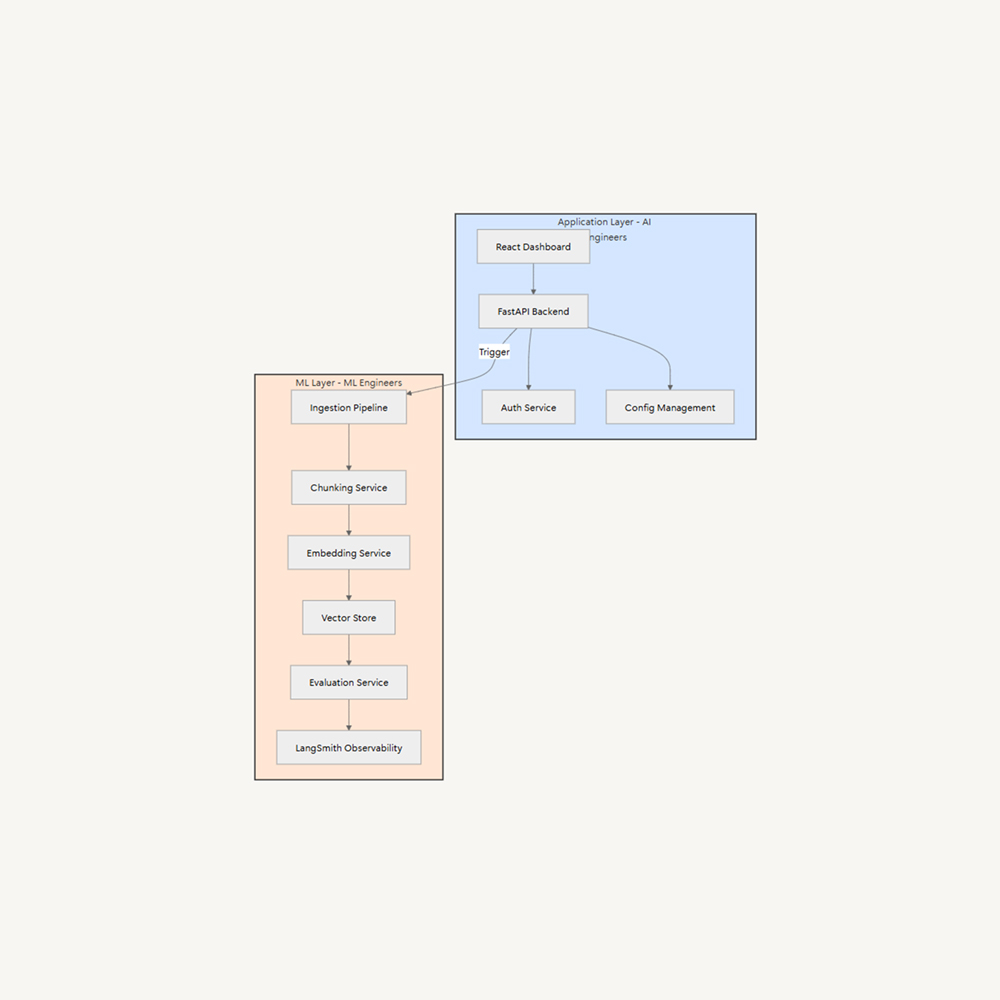

The rise of Generative AI has shifted the roles of AI Engineering and ML Engineering, with AI Engineers integrating generative AI into software products. This shift requires clear ownership boundaries and specialized expertise. A proposed solution is layer separation, which splits concerns into two distinct layers: an Application layer owned by AI Engineers and Software Engineers (frontend development, backend APIs, business logic, and user experience), and an ML layer owned by ML Engineers. This allows AI Engineers to focus on user experience while ML Engineers optimize AI systems.

The LLMOps Database offers a curated collection of 300+ real-world generative AI implementations, providing technical teams with practical insights into successful LLM deployments. This searchable resource includes detailed case studies, architectural decisions, and AI-generated summaries of technical presentations to help bridge the gap between demos and production systems.

As organizations rush to adopt generative AI, several major tech companies have proposed maturity models to guide this journey. While these frameworks offer useful vocabulary for discussing organizational progress, they should be viewed as descriptive rather than prescriptive guides. Rather than rigidly following these models, organizations are better served by focusing on solving real problems while maintaining strong engineering practices, building on proven DevOps and MLOps principles while adapting to the unique challenges of GenAI implementation.

Unlock the potential of your ML infrastructure by breaking free from orchestration tool lock-in. This comprehensive guide explores proven strategies for building flexible MLOps architectures that adapt to your organization's evolving needs. Learn how to maintain operational efficiency while supporting multiple orchestrators, implement robust security measures, and create standardized pipeline definitions that work across different platforms. Perfect for ML engineers and architects looking to future-proof their MLOps infrastructure without sacrificing performance or compliance.

Discover how organizations can transform their machine learning operations from manual, time-consuming processes into streamlined, automated workflows. This comprehensive guide explores common challenges in scaling MLOps, including infrastructure management, model deployment, and monitoring across different modalities. Learn practical strategies for implementing reproducible workflows, infrastructure abstraction, and comprehensive observability while maintaining security and compliance. Whether you're dealing with growing pains in ML operations or planning for future scale, this article provides actionable insights for building a robust, future-proof MLOps foundation.

Discover why cognitive load is the hidden barrier to ML success and how infrastructure abstraction can revolutionize your data science team's productivity. This comprehensive guide explores the real costs of infrastructure complexity in MLOps, from security challenges to the pitfalls of home-grown solutions. Learn practical strategies for creating effective abstractions that let data scientists focus on what they do best – building better models – while maintaining robust security and control. Perfect for ML leaders and architects looking to scale their machine learning initiatives efficiently.

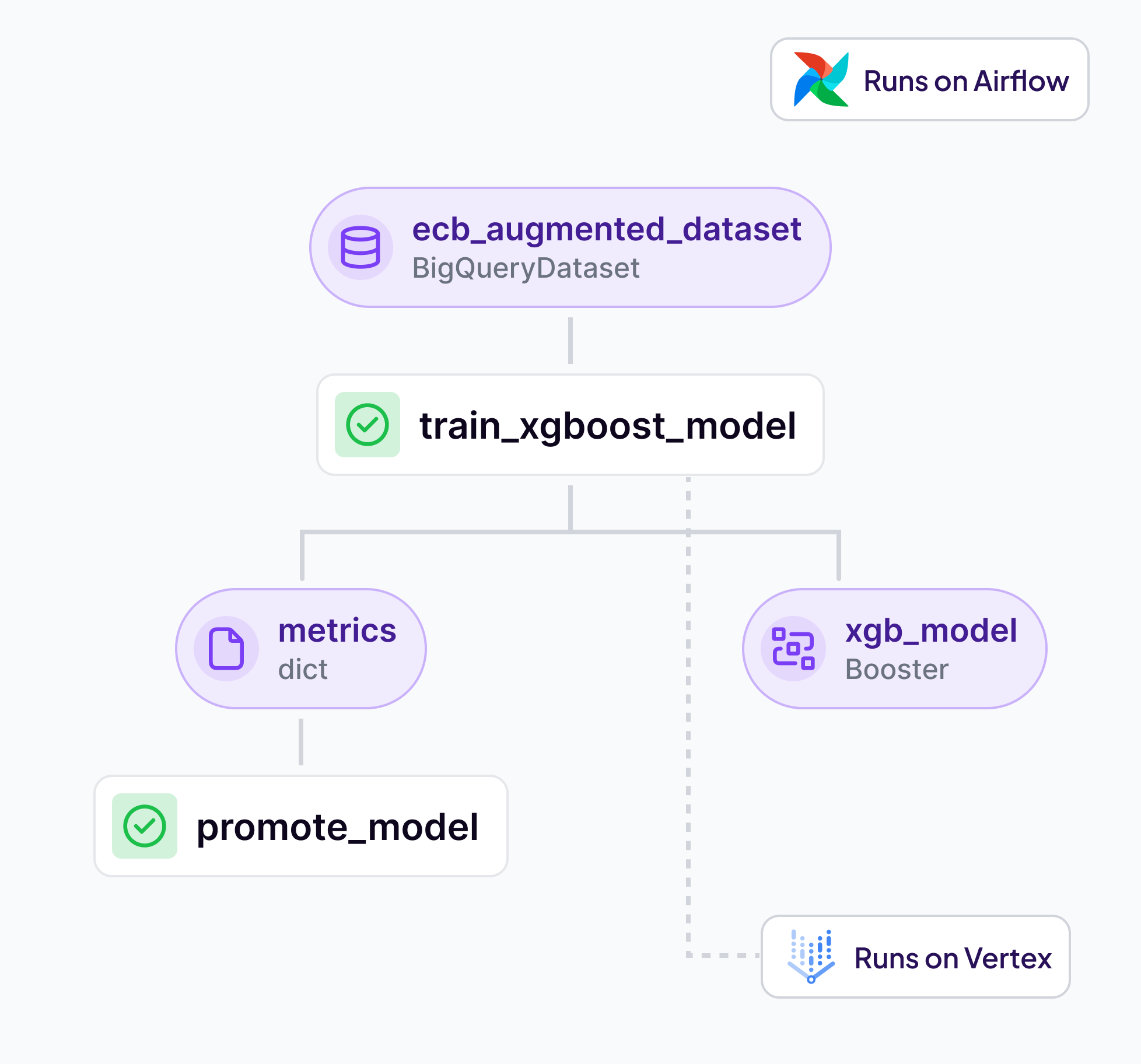

ZenML 0.70.0 has launched with major improvements but requires careful handling during the upgrade due to significant database schema changes. Key highlights include enhanced artifact versioning with batch processing capabilities, improved scalability through reduced server requests, unified metadata management via the new log_metadata method, and flexible filtering with the new oneof operator. The release also features expanded documentation covering finetuning and LLM/ML engineering resources. Due to the database changes, users must back up their data and test the upgrade in a non-production environment before deploying to production systems.

Machine Learning (ML) adoption is gaining momentum, but teams face challenges in building robust pipelines, maintaining data and model quality, and monitoring at scale. Recognizing and overcoming these challenges is crucial.

Why use ZenML alongside AWS / GCP / Azure MLOps platforms? Let's dive into how ZenML complements and enhances existing cloud MLOps infrastructure.

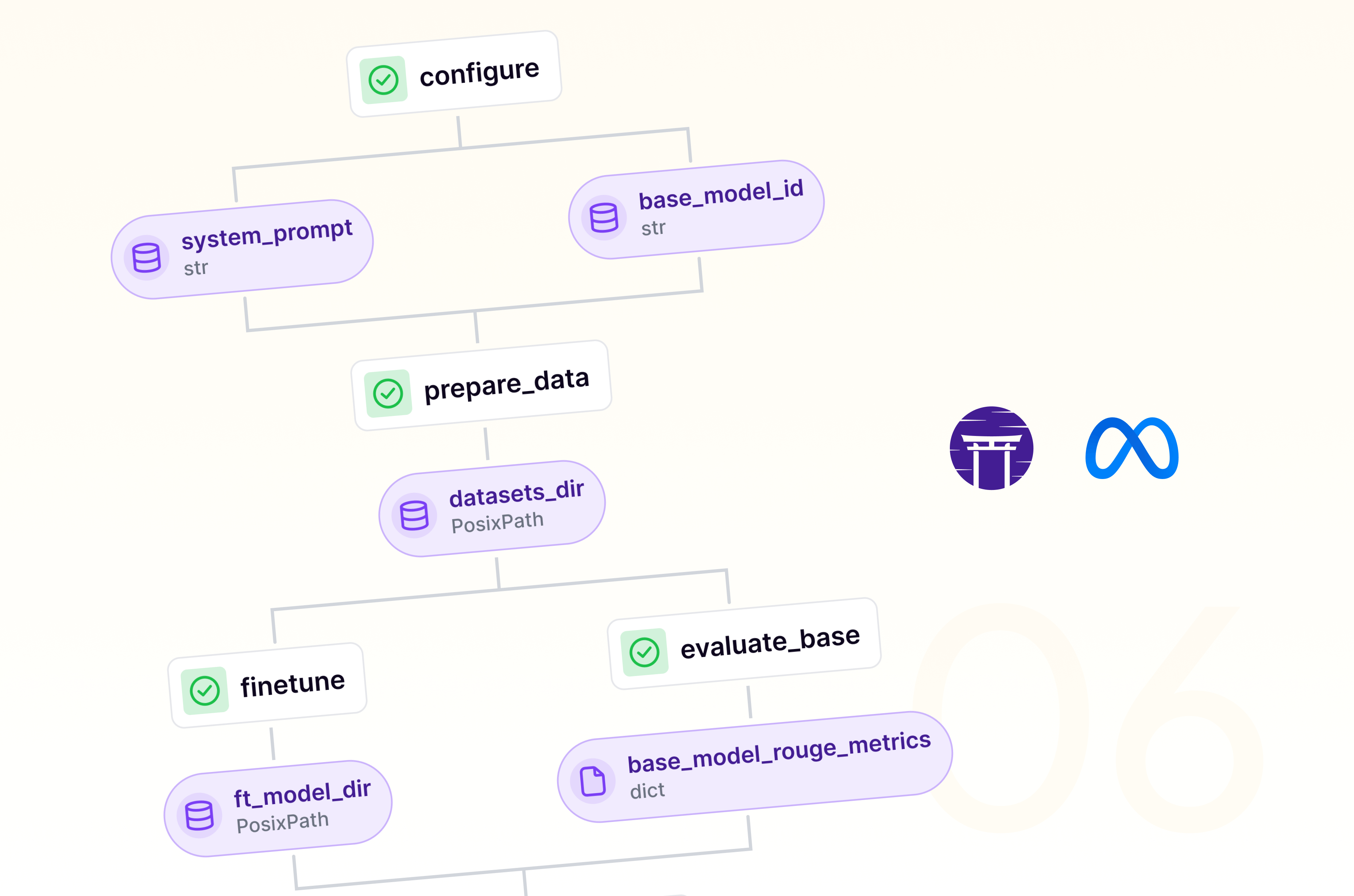

The combination of ZenML and Neptune can streamline machine learning workflows and provide unprecedented visibility into experiments. ZenML is an extensible framework for creating production-ready pipelines, while Neptune is a metadata store for MLOps. When combined, these tools offer a robust solution for managing the entire ML lifecycle, from experimentation to production. The combination of these tools can significantly accelerate the development process, especially when working with complex tasks like language model fine-tuning. This integration offers the ability to focus more on innovating and less on managing the intricacies of your ML pipelines.

This blog post discusses how integrating ZenML with Comet enhances the experimentation process. ZenML is an extensible framework for creating portable, production-ready pipelines, while Comet is a platform for tracking, comparing, explaining, and optimizing experiments and models. The combination offers seamless experiment tracking, enhanced visibility, a simplified workflow, improved collaboration, and flexible configuration. The process involves installing ZenML and enabling the Comet integration, registering the Comet experiment tracker in the ZenML stack, and customizing experiment settings.

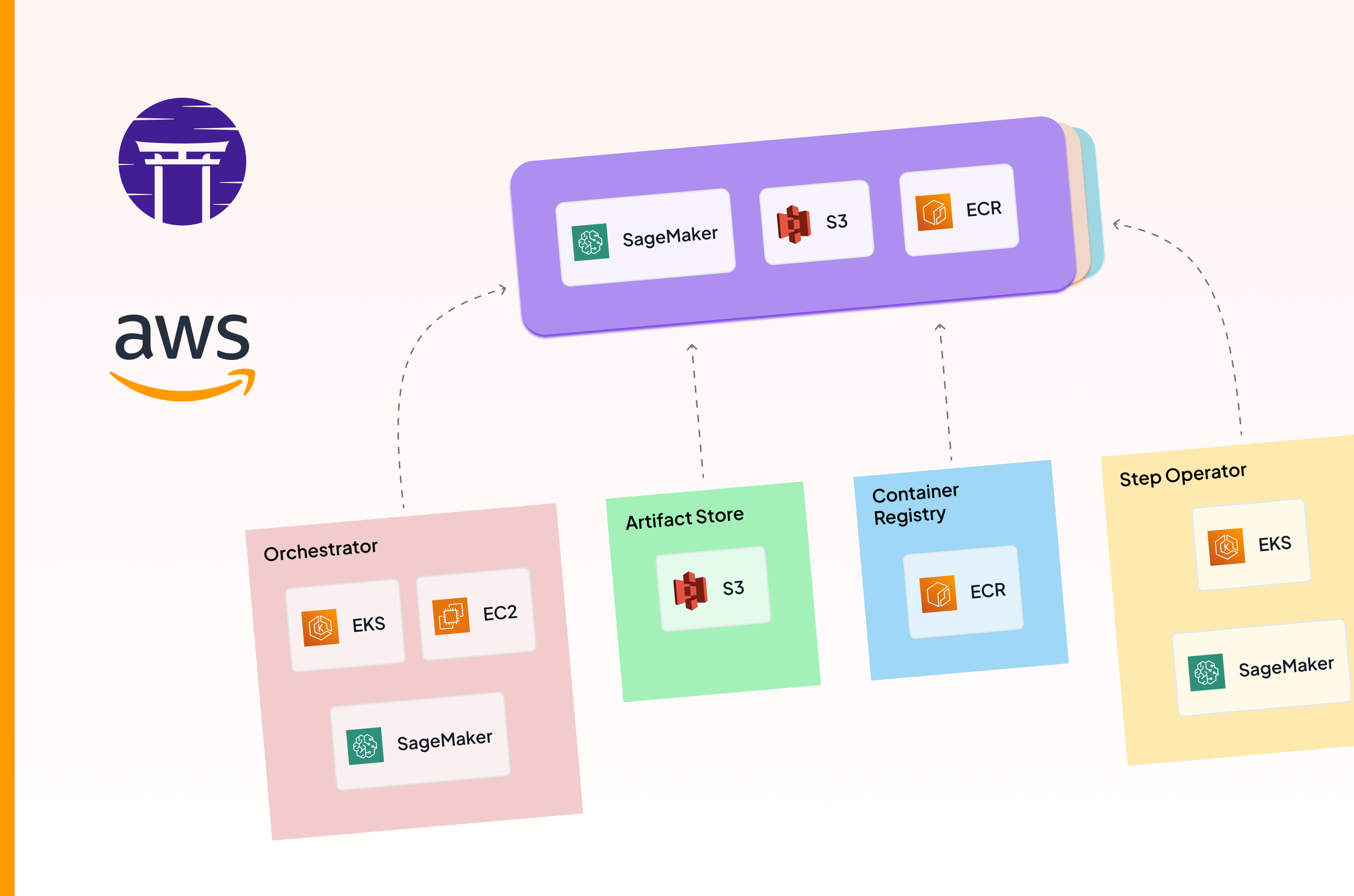

Machine Learning Operations (MLOps) is crucial in today's tech landscape, even with the rise of Large Language Models (LLMs). Implementing MLOps on AWS, leveraging services like SageMaker, ECR, S3, EC2, and EKS, can enhance productivity and streamline workflows. ZenML, an open-source MLOps framework, simplifies the integration and management of these services, enabling seamless transitions between AWS components. In ZenML, an MLOps stack is assembled from components such as orchestrators, artifact stores, container registries, model deployers, and step operators. AWS offers a suite of managed services, such as ECR, S3, and EC2, but careful planning and configuration are required for a cohesive MLOps workflow.

A new ZenML newsletter featuring Istanbul cooking adventures, faster Docker builds, and more

Comparing Airflow, Dagster, and Prefect: Choosing the right orchestration tool for your data workflows.

The combination of ZenML and SkyPilot offers a robust solution for managing ML workflows.

MLOps on Google Cloud Platform streamlines machine learning workflows using Vertex AI and ZenML.

Playing around with some genAI services and tools to create a story and comic that showcases the journey of MLOps adoption for a small team.

An overview of MLOps principles, implementation strategies, best practices, and tools for managing machine learning lifecycles.

ZenML's new direction: Simplifying infrastructure connections for enhanced MLOps.

OpenAI's Batch API lets you submit requests for 50% of what you'd normally pay. Not all of their models work with the service, but in many use cases it will save you lots of money on LLM inference, as long as you don't need immediate answers: batch results come back asynchronously, within a 24-hour window. So don't use it to build a chatbot!
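
The input to a batch job is a JSONL file with one request per line, and the savings are simple arithmetic. Below is a minimal sketch that builds lines in the documented batch request shape (`custom_id`, `method`, `url`, `body`) and compares synchronous with batch cost. The per-token prices and the model name are placeholders, not OpenAI's current rates, and `build_batch_lines` and `estimate_cost` are hypothetical helpers.

```python
import json

# Placeholder prices in USD per 1M tokens; NOT real OpenAI rates.
PRICE_PER_M_INPUT = 0.50
PRICE_PER_M_OUTPUT = 1.50
BATCH_DISCOUNT = 0.5  # batch requests are billed at 50% of the normal price


def build_batch_lines(prompts, model="gpt-4o-mini", max_tokens=256):
    """Return one JSON-encoded chat completion request per prompt (JSONL lines)."""
    lines = []
    for i, prompt in enumerate(prompts):
        request = {
            "custom_id": f"request-{i}",  # lets you match each result to its input
            "method": "POST",
            "url": "/v1/chat/completions",
            "body": {
                "model": model,
                "messages": [{"role": "user", "content": prompt}],
                "max_tokens": max_tokens,
            },
        }
        lines.append(json.dumps(request))
    return lines


def estimate_cost(input_tokens, output_tokens, batch=True):
    """Estimate the USD cost of a workload, applying the batch discount if requested."""
    full_price = (
        input_tokens * PRICE_PER_M_INPUT + output_tokens * PRICE_PER_M_OUTPUT
    ) / 1_000_000
    return full_price * BATCH_DISCOUNT if batch else full_price


if __name__ == "__main__":
    for line in build_batch_lines(["Summarize ticket 1", "Summarize ticket 2"]):
        print(line)
    # 10M input + 2M output tokens at the placeholder prices
    print(estimate_cost(10_000_000, 2_000_000, batch=False))  # 8.0
    print(estimate_cost(10_000_000, 2_000_000, batch=True))   # 4.0
```

From there, the file would be uploaded with `purpose="batch"`, and a batch would be created with `completion_window="24h"` through the official `openai` client (`client.files.create`, then `client.batches.create`). Check the current API reference before relying on the exact fields.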

On the difficulties in precisely defining a machine learning pipeline, exploring how code changes, versioning, and naming conventions complicate the concept in MLOps frameworks like ZenML.

Exploring the evolution of MLOps practices in organizations, from manual processes to automated systems, covering aspects like data science workflows, experiment tracking, code management, and model monitoring.

Today, we're back in LLM land (not too far from La La Land). Not only do we have a new LoRA + Accelerate-powered finetuning pipeline for you, we're also hosting a RAG-themed webinar.

We released an updated way to deploy MLOps infrastructure, building on the success of the `mlops-stack` repo and its stack recipes. All the new goodies are available via the `mlstacks` Python package.

The ZenML MLOps Competition ran from October 10 to November 11, 2022, and was a wonderful expression of open-source MLOps problem-solving.

Transform PyTorch quickstart code into a ZenML pipeline and add experiment tracking and a secrets manager component.

Test automation is tedious enough in traditional software engineering, and the complexities of machine learning can make it even less appealing. Using Deepchecks with ZenML pipelines can get you started in the time it takes to read this article.

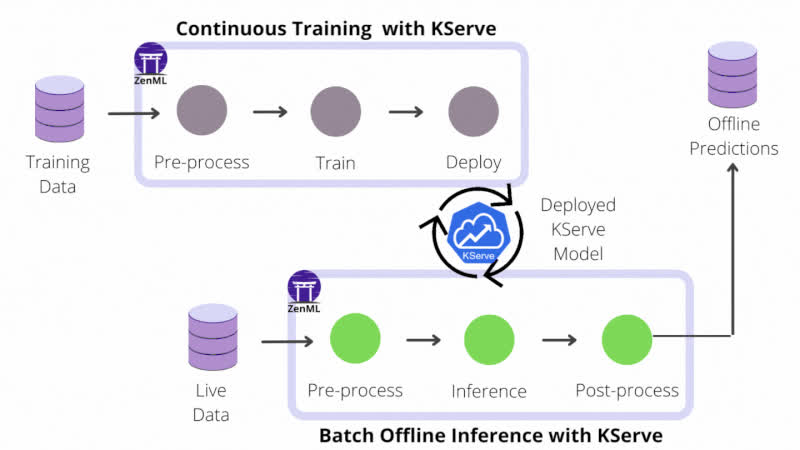

How to use ZenML and KServe to deploy serverless ML models in just a few steps.

ZenML combines forces with Great Expectations to add data validation to the list of continuous processes automated with MLOps. Discover why data validation is an important part of MLOps and try the new integration with a hands-on tutorial.

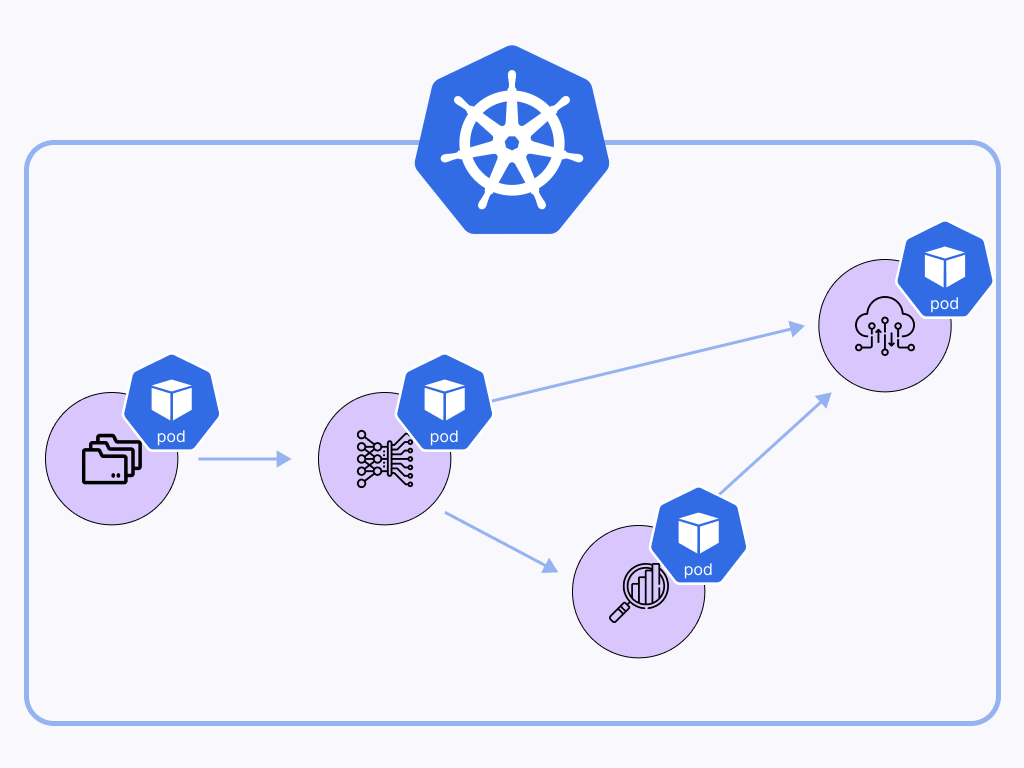

Getting started with distributed ML in the cloud: How to orchestrate ML workflows natively on Amazon Elastic Kubernetes Service (EKS).

How ZenML lets you have the best of both worlds: serverless managed infrastructure without the vendor lock-in.

We put together a list of 48 open-source annotation and labeling tools to support different kinds of machine-learning projects.

This week I spoke with Ben Wilson, author of 'Machine Learning Engineering in Action', a jam-packed guide to all the lessons that Ben has learned over his years helping companies get models out into the world and run them in production.

I explain why data labeling and annotation should be seen as a key part of any machine learning workflow, and how you probably don't want to label data only at the beginning of your process.

We built an end-to-end production-grade pipeline using ZenML for a customer churn model that can predict whether a customer will remain engaged with the company or not.

As our AI/ML projects evolve and mature, our processes and tooling also need to keep up with the growing demand for automation, quality and performance. But how can we possibly reconcile our need for flexibility with the overwhelming complexity of a continuously evolving ecosystem of tools and technologies? MLOps frameworks promise to deliver the ideal balance between flexibility, usability and maintainability, but not all MLOps frameworks are created equal. In this post, I take a critical look at what makes an MLOps framework worth using and what you should expect from one.

Connecting model training pipelines to deploying models in production is seen as a difficult milestone on the way to achieving MLOps maturity for an organization. ZenML rises to the challenge and introduces a novel approach to continuous model deployment that enables a smooth transition from experimentation to production.

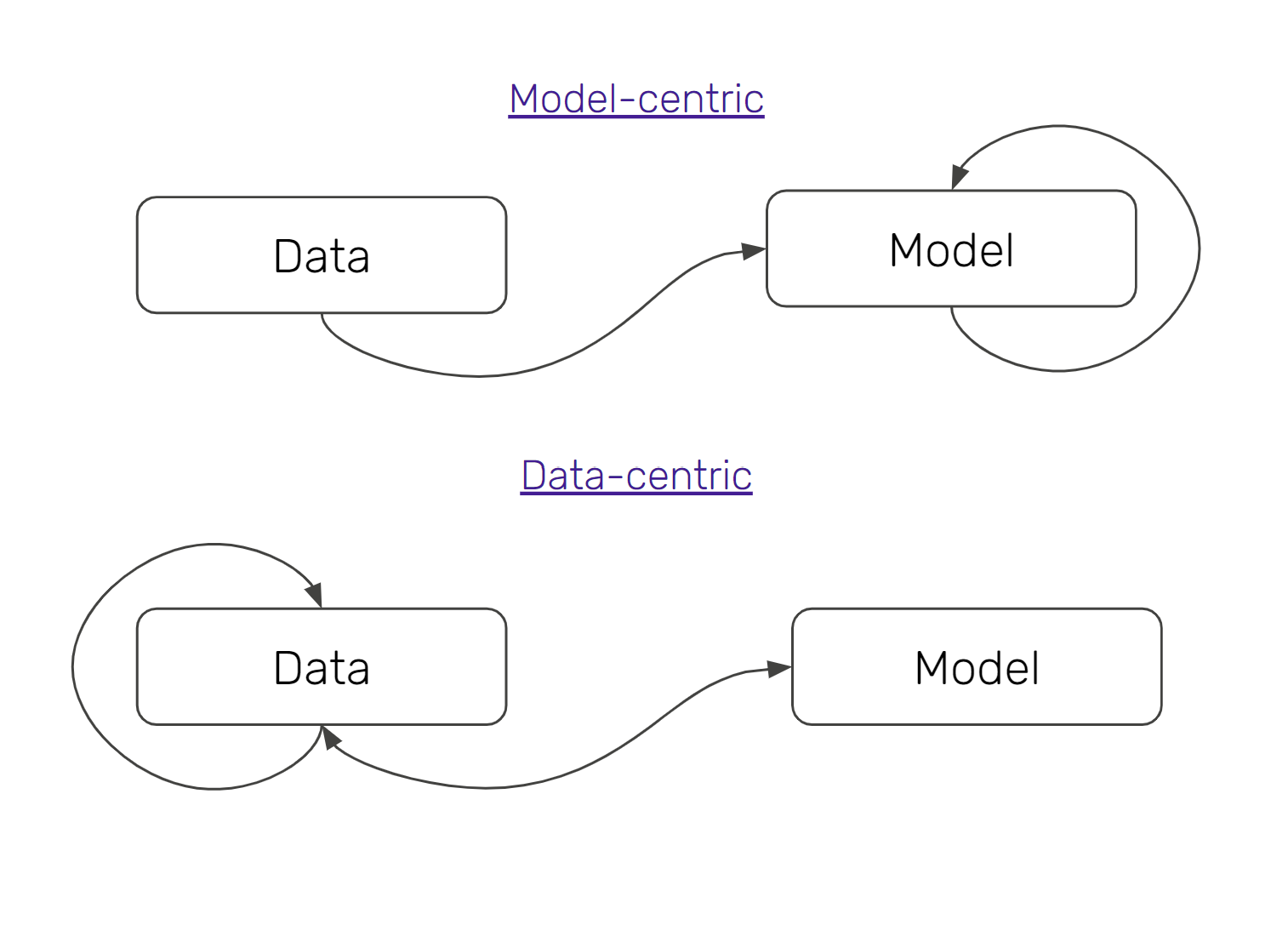

ML practitioners today are embracing data-centric machine learning, because of its substantive effect on MLOps practices. In this article, we take a brief excursion into how data-centric machine learning is fuelling MLOps best practices, and why you should care about this change.

This week I spoke with Matt Squire, the CTO and co-founder of Fuzzy Labs, where they help partner organizations think through how best to productionise their machine learning workflows.

This week I spoke with Emmanuel Ameisen, a data scientist and ML engineer currently based at Stripe. Emmanuel also wrote an excellent O'Reilly book called 'Building Machine Learning Powered Applications', a book I find myself often returning to for inspiration and that I was pleased to get the chance to reread in preparation for our discussion.

An exploration of some frameworks created by Google and Microsoft that can help think through improvements to how machine learning models get developed and deployed in production.

This week I spoke with Johnny Greco, a data scientist working at Radiology Partners. Johnny transitioned into his current work from an academic career in astronomy, where he also worked in the open-source space to build a really interesting synthetic image data project.

Connecting model training pipelines to deploying models in production is regarded as a difficult milestone on the way to achieving machine learning operations maturity for an organization. ZenML rises to the challenge and introduces a novel approach to continuous model deployment that enables a smooth transition from experimentation to production.

Tristan and Alex discuss where machine learning and AI are headed in terms of the tooling landscape. Tristan outlines a vision of a higher abstraction level, something he's working on making a reality as CEO at Continual.

Mohan and Alex discuss neurosymbolic AI and the implications of a shift towards that as a core paradigm for production AI systems. In particular, we discuss the practical consequences of such a shift, both in terms of team composition as well as infrastructure requirements.

ZenML recently added an integration with Evidently, an open-source tool that allows you to monitor your data for drift (among other things). This post showcases the integration alongside some of the other parts of Evidently that we like.

We discuss how to monitor models in production, and how monitoring helps you in the long run.

Use caches to save time in your training cycles, and potentially to save some money as well!
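
Step caching rests on one idea: a step whose code and inputs haven't changed doesn't need to run again. What follows is a minimal, framework-agnostic sketch of that idea. It is not ZenML's caching implementation. The `cached_step` decorator, the manually supplied `code_version` string, and the in-memory `_CACHE` dict are hypothetical stand-ins.

```python
import functools
import hashlib
import json

# In-memory stand-in for a persistent artifact store (hypothetical).
_CACHE = {}


def cache_key(step_name, code_version, params):
    """Hash the step identity, its code version, and its inputs into a stable key."""
    payload = json.dumps(
        {"step": step_name, "code": code_version, "params": params}, sort_keys=True
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def cached_step(step_name, code_version):
    """Skip re-running a step when the same code has already seen the same inputs."""
    def decorator(fn):
        @functools.wraps(fn)
        def wrapper(**params):  # keyword-only, JSON-serializable inputs assumed
            key = cache_key(step_name, code_version, params)
            if key not in _CACHE:
                _CACHE[key] = fn(**params)  # cache miss: do the expensive work once
            return _CACHE[key]  # cache hit, or the freshly stored result
        return wrapper
    return decorator


if __name__ == "__main__":
    calls = {"count": 0}

    @cached_step("preprocess", code_version="v1")
    def preprocess(rows, scale):
        calls["count"] += 1
        return [r * scale for r in rows]

    preprocess(rows=[1, 2, 3], scale=2)  # runs the step
    preprocess(rows=[1, 2, 3], scale=2)  # served from the cache
    preprocess(rows=[1, 2, 3], scale=3)  # new parameter, runs again
    print(calls["count"])  # 2
```

Bumping `code_version` invalidates old entries. A real system would derive the version from the step's source and persist results, so that the cache survives across runs.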

We discuss the role of MLOps in an organization, some deployment war stories from our guest's career, and what he considers to be 'best practices' in production machine learning.

We launched a podcast to have conversations with people working to productionize their machine learning models and to learn from their experience.

Eliminate technical debt with iterative, reproducible pipelines.

MLOps isn't just about new technologies and coding practices. Getting better at productionizing your models also likely requires some institutional and/or organisational shifts.

![Why ML in production is (still) broken - [#MLOps2020]](https://assets.zenml.io/webflow/64a817a2e7e2208272d1ce30/79950cfa/65316d2bc051413294285f1e_mlopsworldthumbnail.png)

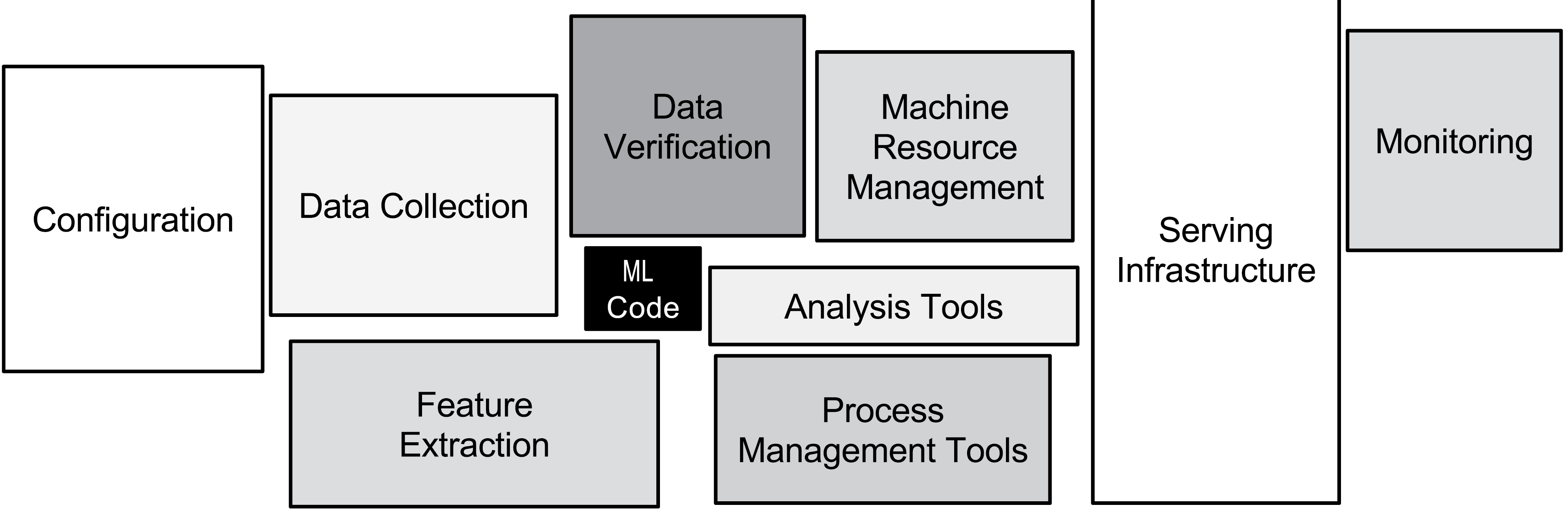

The MLOps movement and associated new tooling is starting to help tackle the very real technical debt problems associated with machine learning in production.

Using config files to specify infrastructure for training isn't widely practiced in the machine learning community, but it helps a lot with reproducibility.

Software engineering best practices have not been brought into the machine learning space, with the side-effect that there is a great deal of technical debt in these code bases.